
Adobe is supercharging the PDF with AI, and looking more like Microsoft Office 365 in the process

In an exclusive conversation, Adobe designers explain how LLMs and PDFs will combine for a new Acrobat experience.

Since first launching in 1993, the PDF has always been at least a little bit frustrating. On one hand, it’s a pixel-accurate container for just about any mix of imagery and type, and a reliable standard for complex multimedia documents of all sorts. On the other, that content has always felt stuck behind glass—ostensibly uneditable and hard to parse. Opening a 15-page PDF can feel as daunting as a 500-page novel.

But now, Adobe believes they’ve cracked it—at least a bit—with the help of modern AI. In an update to Acrobat launching today, the company is introducing AI capabilities that will help you deep dive into a PDF in seconds, summarizing its insights, reformatting data visualizations into hard numbers, and even citing its knowledge to answer your very specific questions. (Adobe’s free PDF software, Reader, will get some of these new AI features, too.)

In a demo of the technology I was shown prerelease, I watched as natural language questions sliced and diced through a 100+ page annual report, listing revenue figures and competitive risks on demand. The PDF suddenly felt like a book that could answer questions about itself. And while technically the system sits atop Microsoft/OpenAI’s conversational LLM technology, I could actually see how Adobe may be positioning itself as a competitor to platforms like Office 365 in the future.

“How you make sense of your personal documents and all of your information stored in PDFs across your organization are very different [problems], and you can imagine huge value with both of those,” says Eric Snowden, VP of design at Adobe.

THE NEW ACROBAT IS AN OLD GOAL

The PDF has been a vexing technical and design challenge at Adobe for decades. “I’d say the first 20 years of work on this team was about making the page pixel-perfect. So it looks exactly like it does in print,” says 29-year Adobe veteran Phil Ydens, a VP on the Adobe Document Cloud. “That seems crazy, but having worked on it, it’s a very deep area.”

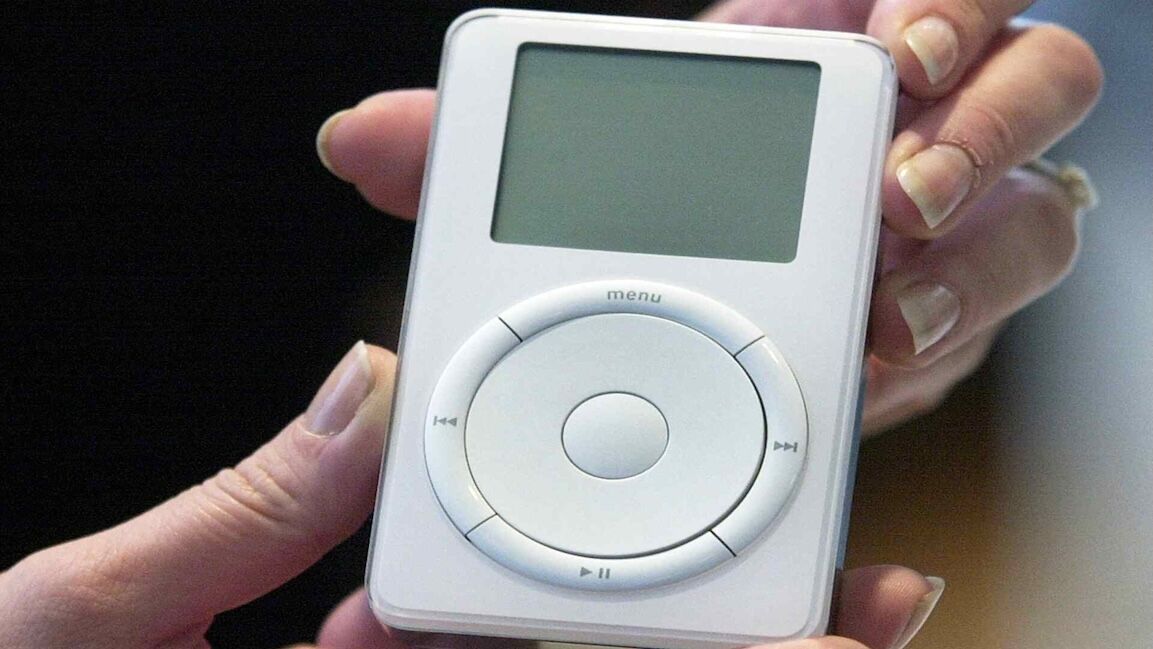

The PDF’s raison d’être has always been as a document that would look the same on your screen as it would on a printed piece of paper. Originally that screen was your desktop, but as the smartphone took over, and Adobe tried displaying PDFs via an app, the medium’s paper-inspired shortcomings became apparent.

“It turned out that didn’t work well. It’s hard to take an 8.5”x11” page and fit it on the [small] screen,” says Ydens. “Even if it’s pixel perfect, you can’t read it. There was lots of pinching and zooming, turning it sideways. It was just awkward.”

For roughly the past five years, Adobe has been attempting to turn the PDF into something more analyzable and structured, something that could be intelligently rearranged or remixed for different screens. The company trained its own AI systems to identify paragraphs, headers, charts, and other elements. In 2020, it released Liquid Mode in Acrobat, which uses some of this understanding to dynamically reformat PDFs so they are more readable on mobile.

Liquid Mode made PDFs a bit easier to read on phones, but you were still reading that giant PDF, albeit rearranged in smaller pieces. What the design team really dreamed of was something else: a feature as simple and straightforward as a PDF summary, if only they could somehow analyze the contents of PDFs well enough to offer it. Snowden suggests that the design team has proposed this feature every year for the past decade. But only with the recent breakthrough in LLMs did it become possible to marry Adobe’s understanding of every PDF’s unique structure with an AI that could actually make sense of the information inside of the document.

“I have to tell you, I always felt like if I could just get summaries [working] . . . it could make a big difference,” says Ydens of his 30 years spent developing PDFs. “We tried over and over again to get that technology to work, and it just wasn’t reliable enough—until LLMs arrived. What I thought was the Holy Grail just got accomplished when ChatGPT came out.”

WHAT DOES AI DO IN ACROBAT TODAY?

Acrobat is relaunching today with two specific (English-only) AI features that are tucked neatly into a bar that lives to the right of your PDF.

The first feature is AI summarization. Basically, AI breaks down a long PDF into a clean list of chapters. Tap into any chapter on the list, and it will expand to reveal a bulleted summary, while the main window whisks you to the page of the actual PDF where this information can be found. If you’ve used AI transcription platforms like Microsoft Teams or Otter.ai, you’ll recognize this summary trick right away. It’s just the sort of task that modern AIs are superb at.

The second feature is an AI assistant, and this feature will actually come to Adobe’s free-to-use Reader software, too. In a setup familiar to anyone who has used ChatGPT and other LLMs, you can ask any question about the specific PDF you’re in and get an answer. (The system also automatically suggests questions you can ask.) In my demo, this is really where the system shined. Whereas ChatGPT and Gemini are ready to answer fuzzy questions about the entire universe of information, Acrobat is laser-focused on the document right in front of you. Its strength seems to be in its specificity.

That’s a powerful difference, even if a lot of the nuance is simply in presentation. In the example of an annual report, you could ask a question about revenue, and AI jumps through the document to assemble many disparate pieces of information (stored in charts, infographics, or text) and turns them into a bulleted list, complete with clean citations you can tap on to find the source of the claim. You could even do something more plebeian, like ask the system to consolidate a chart or data viz into text you could simply copy into an email. It’s just the sort of task that’s been frustratingly impossible with a PDF and will now be mindless.

Now, the technically inclined could do a lot of this on their own, piecing together various AI tools to use image recognition and LLMs in combination to do analysis. But Acrobat’s unique value proposition is just the sheer UX simplicity of using the AI.

“I think a lot of [our advantage] is user interface,” says Snowden. “The interesting thing about the side panel is it’s a paradigm people understand, and when it comes to new things, [we ask] what can we make familiar, because there’s a bunch of things that have to be different. Bringing UX familiarity to a new paradigm is a way to ease people into warm water.”

The other advantage may be Adobe’s stance on data privacy. From what the company has told me, Acrobat allows you to get the best parts of modern AI analysis without worrying about leaking your private data. Adobe claims it doesn’t use any of a user’s data or queries to train its systems, and its agreement with Microsoft makes the same promise. The company does collect metadata, like the number of headings or pages of the PDFs you work on, but says individuals can opt out and enterprise data is never collected without consent. (By comparison, Google’s Gemini can keep your data for up to three years even after you delete it.)

THE FUTURE OF ACROBAT

Acrobat makes for an impressive demo. But truth be told, I open PDFs at least once a week, and yet I have no idea when I last loaded Acrobat (or even its web app or Chrome extension) to do the job. The PDF itself is an open standard now, so thankfully, you don’t need to use Adobe’s tools to read one. While Adobe says 500 million people use Acrobat every month (and 400 billion PDFs are opened in Acrobat over that time), I still suspect large numbers of people who encounter PDFs day-to-day aren’t actually opening an Adobe product every time they do so. That’s why Adobe’s decision to also add its AI assistant to Reader, Adobe’s free PDF-reading software, seems like an important, complementary play to get the masses using Adobe AI tools. (I didn’t get a preview to see how this feature will appear in Reader.)

That said, Adobe’s broader AI vision for Acrobat could make it an enticing platform to try anew. The company shared pieces of the product roadmap that will be hard to resist. Soon you will be able to pull several PDFs into Acrobat to analyze them together, doing deep research on a topic and essentially building your own little custom AI workspace for a project just by loading a couple of documents. Acrobat will also integrate with the larger Adobe Creative Cloud through products like Adobe Express, so you can easily take information from an uneditable PDF into a generative slide deck. I picture something like Canva for high-end pro users.

But most of all, Acrobat’s AI seems like a bridge for Adobe, a company best known for media creation tools, to step into the sort of enterprise knowledge work Office 365 thrives upon. It could be a conduit to take a company’s pile of PDFs, an untapped repository of corporate data, and link that directly to any sort of media creation.

“I don’t know how many PDFs there are in the world. It’s astronomical. For organizations there could be dozens of PDFs associated with a project,” says Snowden. “To think about multi-document workflows, and making [them] digestible and actionable . . . that starts hinting at the true power.”