
We’re about to glimpse life on the other side of algorithms

For the past decade, social media companies have used algorithms to puppeteer our digital lives. That was always the wrong idea. Now the government is giving us the chance to opt out. Will we take it?

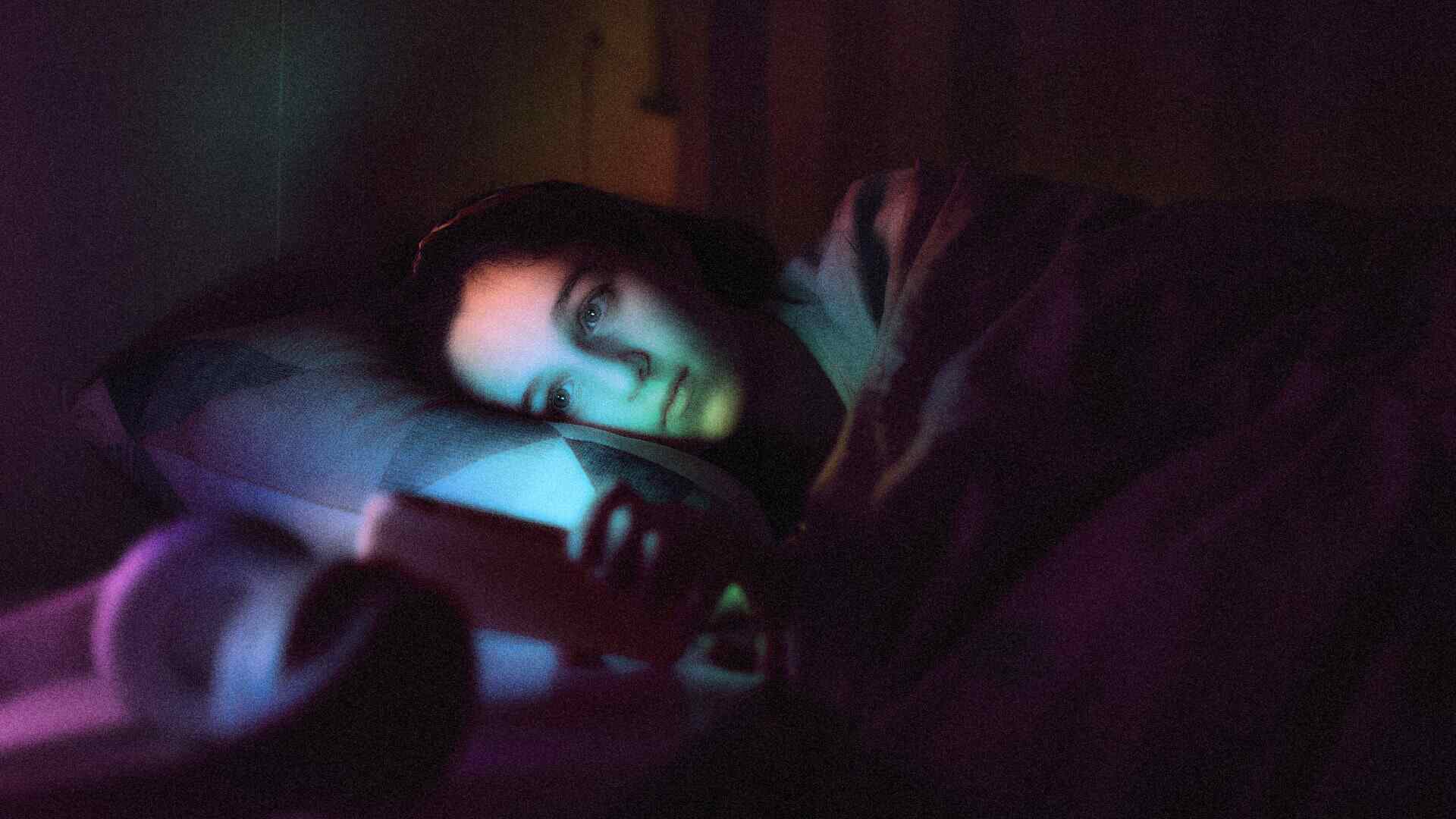

I study my TikTok account like a leech behind glass.

I can see it reaching from behind the screen to hook me: videos of big drippy burgers, flawless skincare routines, unending celebrities on jumbotrons, cleavage. I’ve liked and shared posts about calligraphy, art, and plant-based cooking; I’ve tried to teach the algorithm my tastes, but that’s never appeased the leech. So now I flick past its suggestions as quickly as possible, partly out of academic curiosity and partly in stubborn protest against being profiled and optimized by the algorithm.

Of course, maybe code lurking deep inside TikTok knows . . . this is just the way it can best engage someone like me.

In the 13 years since Facebook first introduced the algorithmic feed—breaking social media out of its reverse chronological timeline in a trend that would be copied by Twitter, Instagram, TikTok, and more—we’ve gone from largely seeing the things we’ve chosen to seeing the things that a few companies want us to. Our social feeds funnel each of us into a personalized multiverse of targeted capitalism. Our internet is essentially one big For You page.

At last, policymakers in the U.S. are responding. Legislation passed in New York State this week bans what lawmakers call the “addictive feed” for minors. (A sister bill also cuts back on companies’ ability to collect data on minors.) With backing from New York governor Kathy Hochul, it’s the first major bill addressing the design of social media to pass in the U.S., and California could follow suit later this year. In fact, I’m told the attorneys general of California and New York worked closely on this new legislation; their bills are nearly identical.

As a result, we could be approaching a post-algorithm era—within the social media industry, at least. That’s because legislators are targeting not just technology or Big Tech, but the design of these digital platforms themselves to limit how they can affect people. If only we’re bold (and disciplined) enough to embrace it.

TAKING ON BIG ALGORITHM

The scale at which algorithms affect us is almost too large to fathom. They are not just useful, but even crucial, in modern life. Algorithms—which are really just complex math equations—live in pacemakers and insulin pumps to keep us healthy; they optimize stoplights, public transit routes, and even our power grid to serve critical infrastructure.

Then again, they are also the core mechanism underpinning the attention economy, the primary business model of the internet. They are implemented not with the goal of making us happy or healthy, but to keep us engaged and maybe sell us something.

More than a decade into their use, it’s certain that social media algorithms are a failed experiment for society. While they’ve made the Metas of the world impossibly wealthy, that wealth has been directly extracted from human well-being.

Teens in particular are experiencing increasing rates of depression and anxiety. As suicide rates soar in parallel with the growth of social media platforms, science is drawing more links between social media and declining mental health. For instance, a 2022 study published in Nature Communications tracked 17,409 people between the ages of 10 and 21 for two-year periods, and it found that the more social media someone used during these sensitive life chapters, the greater the decrease in their life satisfaction. And this isn’t even taking into account that within just a few years, we’ve witnessed the obliteration of discernible truth.

At the same time, social media companies know there’s a lot to lose in disabling an algorithm and returning to the reverse chronological timeline: Namely, your attention. In a 2020 study funded by Meta, 7,200 U.S. adults on Facebook and 8,800 on Instagram had their algorithm deactivated and replaced with a classic timeline. Finding themselves more bored on the services, they flocked to competitors like YouTube and TikTok.

Now, regulating the detrimental effects of social media could play out something like tobacco or alcohol regulation. Knowing it’s bad for everyone, regulators are attempting to curb consumption among those to whom it can cause the earliest and most lasting damage. At least, that’s the approach of the SAFE for Kids Act that just passed in New York. It states that companies cannot serve an “addictive feed” to anyone under 18, and a nearly identical act, using the same “addictive feed” framework, could be passed later this year in California.

“We states are frustrated that the federal government has not acted and created that level playing field across the country,” says California Senator Nancy Skinner. “There is existing federal law that ties our hands. For instance, I can’t touch content, so we have to look at what else we can touch for our kids. That’s where issues around addictive feeds, push notifications—things we have the ability to control.”

CHALLENGING THE VERY UX OF THE INTERNET

The definition of “addictive feed” is particularly interesting and wide-ranging, and it’s described similarly in New York’s bill, quoted here, and in California’s:

“‘Addictive feed’ shall mean a website, online service, online application, or mobile application, or a portion thereof, in which multiple pieces of media generated or shared by users of a website, online service, online application, or mobile application, either concurrently or sequentially, are recommended, selected, or prioritized for display to a user based, in whole or in part, on information associated with the user or the user’s device.”

That broad definition doesn’t dictate the use of technology. It doesn’t use the word “algorithm” or “AI.” No, it actually goes further; it bans a very particular UI/UX that has taken over the online condition—one that shuffles and reshuffles itself uniquely for each user. That broad approach was intentional in hopes of future-proofing the concept.

“In designing the bill, we . . . tried to use terminals that were broader so we weren’t in a situation so narrowly defined that within 6 weeks [it was obsolete],” says Senator Skinner.

The New York bill restricts the dynamic feed as we know it, one that provides an endless thumbprint pile of content to everyone. It seems to address social media platforms like Facebook, Instagram, Snapchat, and TikTok for sure. But the description seems broad enough to impact companies outside “social networks,” like the algorithmic feeds on YouTube, quasi-social-media companies like Pinterest, and perhaps even retailers like Amazon. For now, it’s all a little murky, because in New York State and California, the exact companies affected by this regulation will be specified by the attorney general.

At that point, companies will have 180 days to comply in New York State. California won’t enforce the bill until January 2027.

PROGRESS BY DESIGN

New York’s bill is an important precedent toward progress. And if California’s passes later this year, two of the four most populous states in the U.S. will have introduced restrictions on algorithmic feeds. (Meanwhile, Arkansas attempted to introduce such a bill in 2023 before being blocked by doesn’t-sound-evil-at-all social media trade organization “NetChoice.” Florida has passed a bill banning social media accounts for children under 14 and requiring parental consent for 14- and 15-year-olds.)

It is worth noting what the New York bill does not do. It doesn’t outright ban algorithmic feeds. There are zero new protections for adults, and minors will still be able to use “addictive feeds” with verified permission from a parent. So, any adult who wants to let their child be subjected to an algorithm can (and no doubt, thorny terms and conditions could trick many parents into opting in). That’s a bit different from regulation we see on controlled substances like alcohol.

The bill restricts but doesn’t ban another insidious tool: push notifications. (In New York, push notifications cannot be sent between the hours of midnight and 6 a.m. without parental consent. In California, the bill would also include school hours.)

When I asked Senator Skinner why they didn’t outright ban push notifications as part of California’s bill, she said it was to be unobjectionably reasonable. “I think whether it’s me or another legislator, when we’re crafting a bill we’re trying to reach a balance,” she says. “It would be hard for most people to argue that somehow limiting that push motivation from midnight to 6 a.m. is somehow an overreach.”

The bill also doesn’t seem to stop external bot accounts that could be posting onto feeds via algorithms of their own. It’s also unclear how the bill might address the rising field of generative AI. While not a feed, per se, the AI companion has quickly become a standard across social media apps. It uses models that are just as opaque and personalizable as the feed, albeit through a flow of conversation. These virtual personas could grow close to minors for self-serving and nefarious ends, just like the feed has.

So could these new bills address manipulative AI buddies, too? One drafter hopes the answer is “yes.”

“It’s hard to jump ahead or get ahead of technology because the development of it is fast and furious,” admits Senator Skinner. “But I think while it’s not explicit to AI, if the AI used by any of these platforms was used in a way that could be defined as an ‘addictive feed,’ then [our bill] would affect AI.”