
AI-powered businesses in the Middle East need a new CEO. Yes, Chief Ethics Officer

With rapid AI adoption in the region and the rising risks associated with it, a Chief Ethics Officer becomes imperative for enterprises, experts say.

Two years ago, when Google fired Timnit Gebru — its top AI ethics researcher who was examining the downsides of technology integral to Google’s search products — it triggered waves of protest.

Last month, Twitter’s Ethical AI team was caught up in a purge just after Elon Musk argued that the platform’s algorithm should be more transparent, something the team had been working on until it was fired.

It’s exactly the kind of short-sighted action we’ve witnessed from AI-powered tech companies that claim to be responsible stewards of AI.

Over the past few years, businesses in the Middle East, in the wake of the Industry 4.0 revolution, have been shifting towards AI and advanced technologies. But the unregulated, mass deployment of AI can directly harm people. For instance, in the financial sector, algorithms determine who gets loans and who doesn’t.

In the data-driven digital age, when customers are more knowledgeable and have higher expectations and more choices than ever, businesses’ fortunes rest on trust and transparency.

According to research, executives worldwide recognize the increasing importance of data responsibility, especially concerning ethics.

GOVERN ETHICAL ISSUES OF NEW TECHNOLOGIES

As AI becomes more central, businesses in the region will need to consider how to address and govern the ethical issues these powerful new technologies will inevitably generate. Trust, privacy, and transparency concerns can become legal or reputational issues.

And so now the question increasingly being asked is: Are businesses in the Middle East adopting a responsible AI standard? Are they taking AI ethics concerns seriously?

“Remember, machines do not have and never will have ethics. And machines and AI will replace us in most things we do. It will start with repetitive and mechanical tasks, and then will move on to the more advanced tasks that require analytical or emotional intelligence,” says Manuel Abat, Partner and Head of Digital and Implementation at Oliver Wyman India, Middle East and Africa.

“We must make sure they replace us in a way that isn’t used against a particular set of people or their principles or morals, even if by accident,” he adds.

But not many AI-powered tech companies in the region have AI ethics boards, despite widespread evidence that algorithms, facial recognition, machine learning, and other automated systems replicate and amplify biases and discriminatory practices.

According to Timothy Wood, Partner and Head of Cybersecurity at KPMG Lower Gulf, companies will eventually put in place a diversified AI ethics committee to address and mitigate the ethical risks of AI products. “As companies and organizations in the region are still at the early stages of their AI maturity, AI ethics is acknowledged as a key aspect to AI applications but still not sufficiently the center of attention.”

Agreeing with Wood, Christian Stechel, Principal with Strategy& Middle East, says, “While many Middle Eastern companies are involved with AI systems or use cases in one form or the other, the majority of them remain at the planning or experimentation stages – only a few are in the advanced stages of having fully functional AI-based use cases.”

“Yet even at these early stages, we see focus given by most enterprises on the importance of having the right ethical principles and governance mechanisms in place. Nevertheless, these remain more directional and ‘soft’ guidelines rather than being translated into formal policies or procedures around AI ethics,” adds Stechel.

IMPLEMENTING BLANKET AI REGULATIONS

There’s an ongoing debate about how AI should be regulated and whether new legal systems need to be developed to address risks that may arise from defects in AI systems. However, most regulators have thus far avoided implementing blanket AI regulations that cover all industries, primarily for fear that such an approach may stifle innovation.

In the region, several jurisdictions have introduced specific legislation and mandates for large technology companies, for example through the UAE’s Ministry of AI and Smart Dubai. The UAE government has also created a regional ethics council, and Digital Dubai has published ethical AI principles and a toolkit.

“While most GCC governments have defined AI strategies (National Strategy for Data and AI in KSA), there are no AI-specific regulations yet at the national level. Similarly, at the sector level, some have started working on AI guidelines (The Saudi Food and Drug Authority guidance on AI in the context of medical devices in KSA), but have not reached the level of regulations yet,” says Stechel.

“To enable and stimulate the AI ecosystem, such national and sector-specific regulations are essential to stimulate investments and encourage international players to fund AI projects and initiatives in the region.”

AI presents some immediate ethical challenges that need to be governed by regulation, Wood says. “As we enter the next phase of AI-fueled products and services, there is a need to recognize the complex implications of AI and further codify those ethical standards.”

According to Abat, all countries in the region should have guiding principles setting out what they expect from companies when it comes to AI regulation, to safeguard against unsafe uses of AI.

“There are two elements here, though – there must be regulation and the tools to comply. Otherwise, the gap between companies that have resources to comply with the regulation and those that don’t might increase,” says Abat.

“But you can’t copy and paste ethical frameworks across countries and organizations. It’s not like every company in the world could share the same mission statement or every country the same constitution. Each part of the world, each company, must have its own set of considerations,” he adds.

Even as global attention turns to the purpose and impact of AI, many experts worry that ethical behaviors and outcomes are hard to define, implement and enforce. “As organizations are still experimenting with AI applications to maximize their profits, the pursuit for an ethical AI design will develop, and progress will come as different fields evolve,” says Wood. “This will further be shaped by the market and legal systems that will drive out the inefficient AI systems. Some sectors will be quicker to achieve ethical AI rules and have standards in place. This will depend on the nature of their businesses and ethical implications and will pave the way for the rest of the market.”

C-SUITE ROLE MONITORING AI ACCURACY

Building trust in AI will require a significant effort to instill a sense of morality and operate in full transparency.

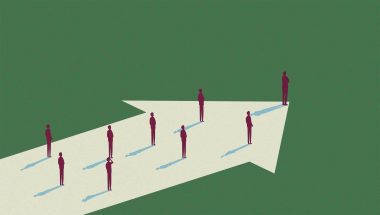

So what is the way forward? To truly have checks and balances, businesses should have a C-suite role responsible for setting the AI agenda, monitoring and assessing accuracy, bias, and privacy, and building technology that genuinely benefits people.

So, is it time for AI-powered tech companies to hire a Chief Ethics Officer?

With the region’s rapid increase of AI adoption and investments across industries, along with the growing maturity of regulatory frameworks and the rising enterprise risks associated with AI, a Chief Ethics Officer is imperative for enterprises, says Stechel. “A Chief Ethics Officer not only ensures that data and AI solutions development, use, and oversight is ethical, but also follows fundamental principles like fairness, explainability, privacy, safety, and security.”

A Chief Ethics Officer is key to minimizing enterprise risks, such as the reputational and financial losses that derive from improper adoption of AI solutions, he adds.

Furthermore, best practices, like the definition of ethical codes of conduct, the set-up of an ethical board, or simply the provisioning of ethical training, are typically driven by a Chief Ethics Officer.

“With the increased adoption of AI, there will be an undeniable need for regulation in the area, such as the creation of a think tank to develop frameworks analyzing the complexity of AI and its ethical implications, along with general guidelines for organizations. The more mature those AI-driven organizations become, the higher the need for a Chief Ethics Officer,” says Wood.

Experts also highlight the importance of AI-powered businesses having an ethical manifesto to increase awareness of the fundamental principles concerning ethical development, use and oversight of data and AI solutions, and to provide guidelines on how to incorporate and apply them in day-to-day activities.

“With the proliferation of AI solutions across organizations, a manifesto that aligns all employees on an enterprise’s ethical and moral priorities becomes more of an imperative than an option,” says Stechel.

Additionally, a Chief Ethics Officer and an AI manifesto help ensure the right returns on an enterprise’s AI investments. “By having the right checks and balances and regulatory compliance in place upfront, enterprises will minimize the risks of fines, penalties, legal liabilities, and bad publicity,” he adds.

According to Abat, the first steps should be to implement strong principles and set up a diverse AI ethics committee, and only then bring in the Chief Ethics Officer. “Businesses shouldn’t start with putting a Chief Ethics Officer in and then having everything cascade down from one person; you should build from the bottom up.”

“It’s not like the CFO is the only person in a business who thinks about money. It’s not a one-person solution: having a multidisciplinary team is incredibly important,” adds Abat, who is currently pursuing a Ph.D. in ethics, AI, and banking.

Multiple perspectives, say experts, need to be considered when defining, building, and running AI.

“We should start prioritizing ethical considerations now – let’s not only do so when we wake up and realize that AI has taken over. Instead of fixing the system later, let’s build it properly now,” says Abat.