
Anthropic reveals its new state-of-the-art AI model, Claude 3.5 Sonnet

The company says the new Sonnet is smarter and faster than anything else out there, and no more expensive than its predecessor.

Welcome to AI Decoded, Fast Company’s weekly newsletter that breaks down the most important news in the world of AI. You can sign up to receive this newsletter every week here.

ANTHROPIC UPS THE ANTE WITH A NEW STATE-OF-THE-ART LLM, CLAUDE 3.5 SONNET

Three months after releasing its Claude 3 family of AI models in March, Anthropic is upping the ante in the AI arms race again with Claude 3.5 Sonnet. The new model sets industry performance records across a number of commonly used reading, coding, math, and vision benchmark tests, Anthropic says. It's three and a half times as fast as Anthropic's current high-end model, Claude 3 Opus, and access will be priced the same as for its mid-tier predecessor, Claude 3 Sonnet.

“This now represents the best and most intelligent model in the industry,” Anthropic cofounder and president Daniela Amodei tells Fast Company. “It has surpassed GPT-4o, all of the Gemini models, and has surpassed Claude Opus, which is our top-tier model.”

Amodei says writing has always been a strength of Claude models, and that 3.5 Sonnet shows a new ability to write in a natural, relatable tone.

Users can try Claude 3.5 Sonnet for free on Claude.ai and in the Claude iOS app, subject to usage limits. Subscribers to the Claude Pro and Team plans get five times as much usage.

On Thursday, Anthropic also previewed a new feature called "Artifacts," which lets enterprise users collaborate with each other and with Claude in real time. It's a dynamic workspace where users can edit and build on content Claude generates, such as code or documents. Artifacts signals a shift toward offering secure collaborative environments with centralized knowledge management for enterprise teams, similar to OpenAI's ChatGPT Enterprise.

WHY DID APPLE DECIDE TO OUTSOURCE ITS AI CHATBOT? RISK, LIKELY.

Apple was, in a number of ways, very smart about the way it rolled out its new generative AI features for iPhones, Macs, and iPads last week. Many of the new AI features are powered by Apple’s own AI models—some of them running on the device itself and others running within a secure cloud. None of this was surprising, given what we’ve reported about Apple’s work in the past. What was surprising was the company’s decision to partner with OpenAI to supply its ChatGPT chatbot for Apple users.

Apple has for years been working on machine learning. The company hired Google’s head of AI, John Giannandrea, back in 2018 to lead its AI strategy. Given that, why didn’t Apple develop its own large language model like its peers did?

It's true that developing a state-of-the-art LLM takes a lot of research talent, a lot of computing power, and time. Apple had access to all that. The reason it didn't push hard toward an LLM-powered chatbot may be the same reason Google hesitated: the risk that the chatbot would leak a user's private information or a corporation's business secrets, spread disinformation, slander someone, or spew dangerous information (plans for a bioweapon, perhaps). When OpenAI released ChatGPT to the public in late 2022, concerns over those risks took a back seat at several big tech companies, which began to fear falling behind in a potentially transformative new technology (and being punished for it by Wall Street). But not Apple.

The AI features that Apple is running on its devices and servers, such as photo corrections, emoji creation, and text summarization, perform a tightly controlled set of low-risk functions. ChatGPT and chatbots like it are far more open-ended, can be used for a wide range of tasks, and are generally more unpredictable (riskier) in their responses. (Both defamation and copyright infringement cases have been brought against OpenAI over ChatGPT's output.) "I do think that is a reason," says Creative Strategies analyst Ben Bajarin. "And it's also why they [Apple] may not focus on this [the ChatGPT feature] as much and will focus more on the fine-tuned features that come across as more valuable and more useful."

Apple denies that risk mitigation is behind its decision to use a third-party chatbot. The company said the decision was more about letting users access an AI chatbot without having to leave whatever they are working on within the OS.

“For broader queries, outsourcing tasks to OpenAI, with a disclosure of transferring data to a third party, will maintain Apple’s trusted brand integrity,” says Blitzscaling Ventures investor Jeremiah Owyang. But it could help Apple in other ways, too. “The strategic advantage for Apple is that it can analyze the queries sent to OpenAI, learn from them, and improve its own models,” Owyang says.

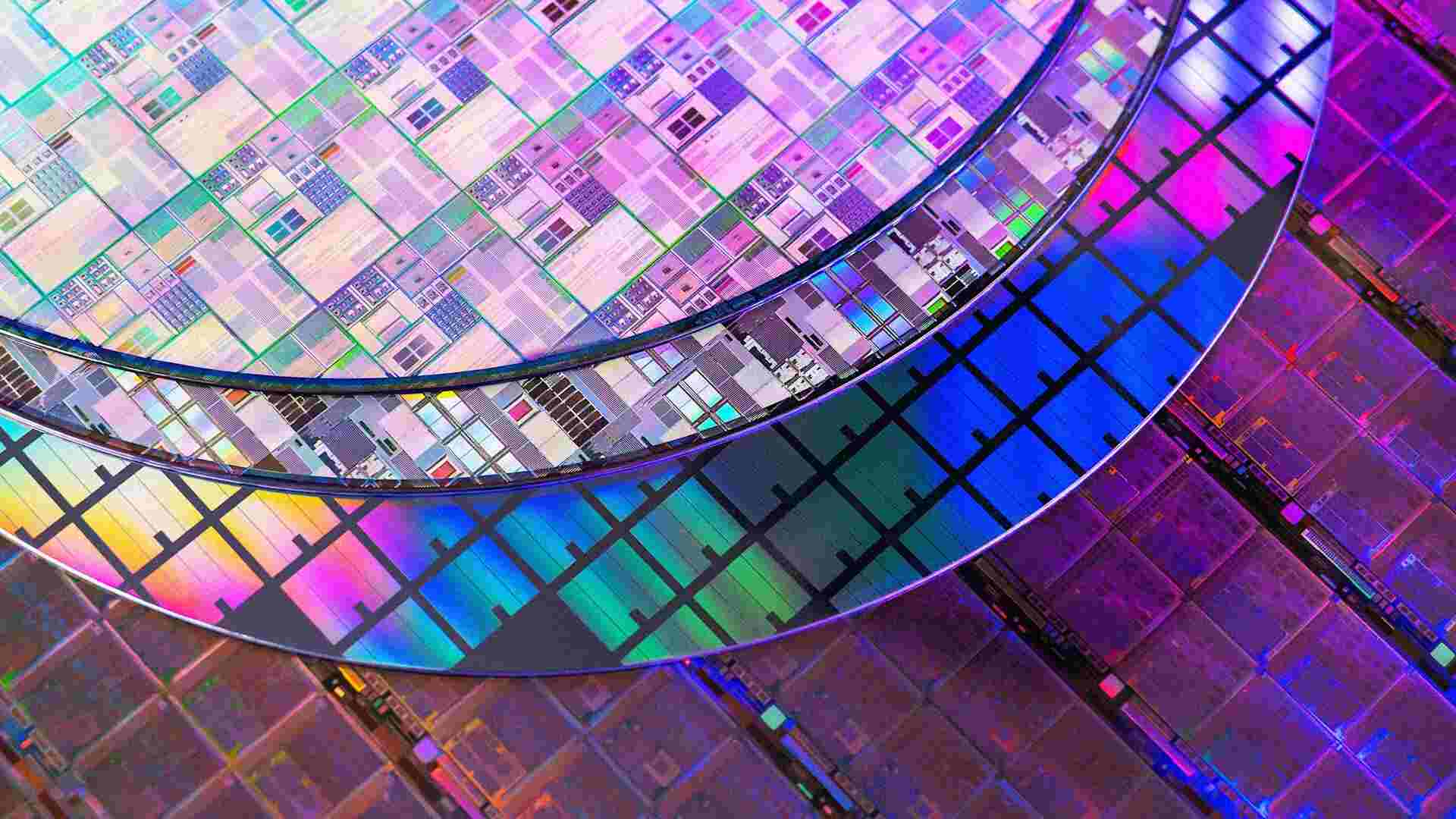

AI CHIPMAKER NVIDIA IS NOW THE MOST VALUABLE COMPANY IN THE WORLD

Buoyed by AI hype and record chip sales, Nvidia on Tuesday passed Microsoft to become the most valuable company in the world. This came just a few days after the chipmaker blew past Apple to take the second-place spot.

On the strength of its GPU sales, Nvidia stock rose 3.5% on Tuesday to $135.58, sending its market cap to $3.335 trillion, Reuters reports. The company's stock has tripled in value so far in 2024. Nvidia accounted for 32% of the S&P 500's return over the first five months of 2024, five times the share of either Microsoft or Meta, according to data from the alternative investment data firm AltIndex.com. Overall, the S&P 500 returned 11.3% during the first five months of the year, the firm says, and Nvidia alone contributed 3.65 percentage points of that.

Even with the AI hype factored in, Nvidia has managed to surprise the markets with better-than-expected results in each of the past two quarters. After its February earnings report, Nvidia's market value increased by $276 billion in a single day. The day after its latest earnings report on May 22, its market value jumped by $217 billion, and it has kept rising ever since.