
Are ChatGPT-powered deepfakes a bigger threat to Middle East businesses?

Concerns are mounting that, when combined with generative AI, imitative tools can turbocharge the work of scammers, disrupt businesses, and erode an already fragile social trust.

In 2020, a bank manager in the UAE was scammed into transferring $35 million after criminals used an AI-generated voice to impersonate its director. This is one of a growing number of examples of how fraudsters can make a fortune from deepfakes, or even go as far as destroying businesses and brands.

Audio deepfake threats have increased, not just in the Middle East but worldwide, while the predominance of ransomware attacks has yet to let up.

Even worse, with chatbots like ChatGPT generating realistic scripts with adaptive real-time responses, deepfake phishing is evolving again and is being called the most dangerous form of cybercrime.

“ChatGPT is an enabler for cybercriminals to design more efficient and effective tactics,” says Dmitry Anikin, Senior Data Scientist at Kaspersky. “This does not mean that a cybercriminal can ask ChatGPT to draft malware, and it will deliver. But, they can use ChatGPT to generate deepfake text.”

ChatGPT might become a cybercriminal’s new weapon of choice. By combining AI with voice generation, a deepfake goes from a static recording to a live, lifelike avatar that can convincingly have a phone conversation. In real time, it can mimic accents, inflections, and other vocal traits that can easily trick anyone.

DAMAGING TO BUSINESSES

According to cybercrime experts, audio AI is learning quickly, and GPT-like tools are making it faster and cheaper for criminals to build more fluent and convincing deepfakes.

Deepfake phishing could be far more damaging to businesses, putting them at greater risk of financial fraud and exposing them to potentially severe penalties.

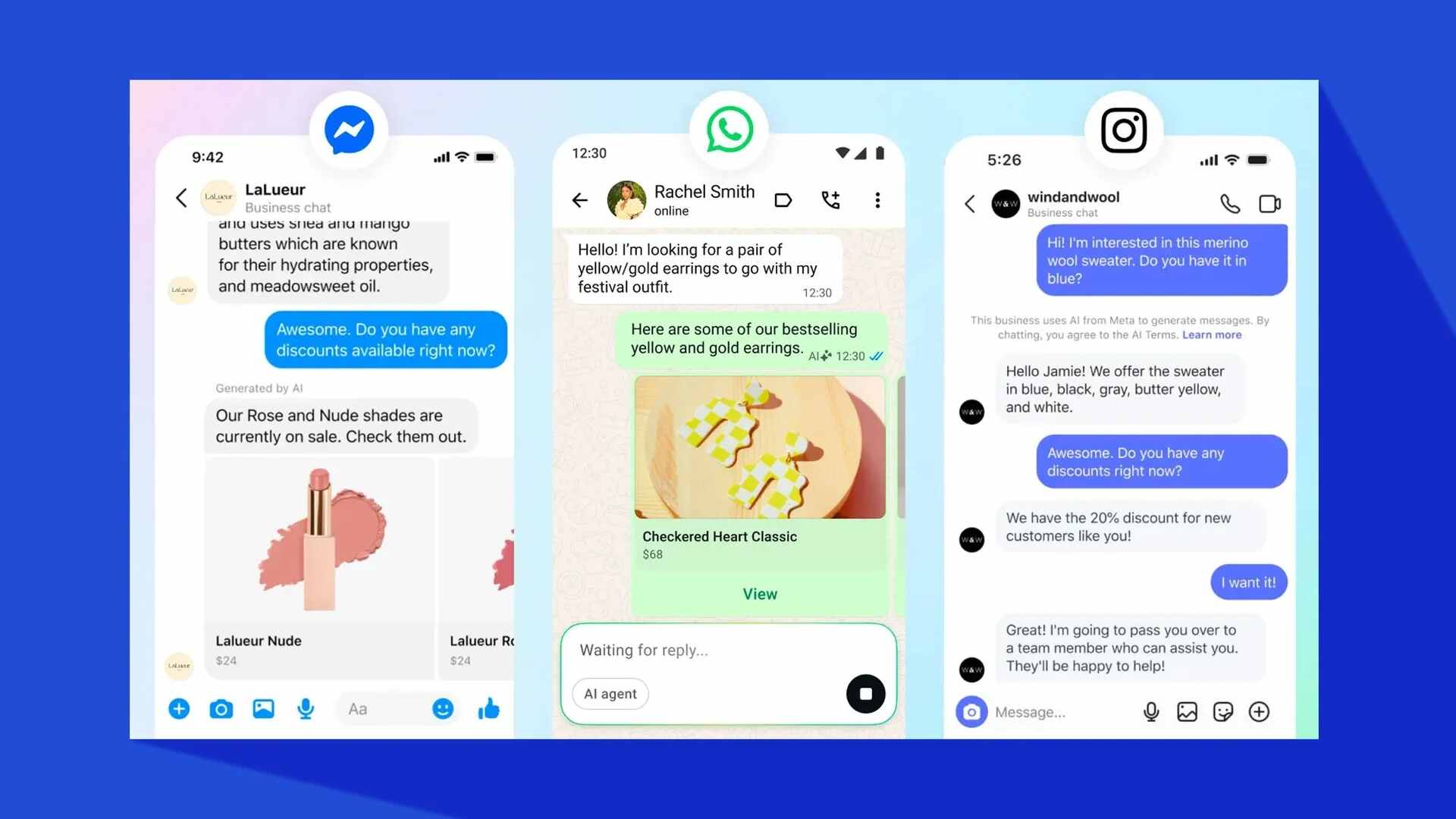

Business processes and communication now take place online, with employees communicating, collaborating, and exchanging information digitally, often not securely. Video and audio requests that appear to come from known superiors and co-workers are a major vulnerability that can have far-reaching implications for the business and the employee.

“Deepfake audio technology is a big threat to businesses that rely on communications with foreign nationals and other geolocations,” says Morey Haber, Chief Security Officer, BeyondTrust. “ChatGPT can adapt and learn, based on samples, to make deepfake audio more realistic and include mannerisms, in real-time, just like the real person.”

Since deepfake technology is already widely available, anyone with malicious intent can synthesize speech and video and execute a sophisticated phishing attack.

“Systems like ChatGPT have already proven their abilities to create realistic real-time scripts. Combining all these technologies means that in the digital world, it is becoming extremely difficult to identify the authentic person from a digital clone,” says Joseph Carson, Chief Security Scientist & Advisory CISO, Delinea.

“[If you] leverage the technology against targeted individuals, the result could be a potentially massive attack vector to conduct improper financial transactions or initiate unwarranted panic,” adds Haber.

Although social engineering scammers have been impersonating people for a long time, experts say cybercriminals now have emerging technologies at their disposal that make those impersonations far more convincing.

“Imagine receiving a realistic call from your CEO asking to transfer funds to a supplier within an hour. In a world primed to function on the concept of ‘seeing is believing’ [or hearing is believing], deepfake technology can have devastating consequences,” adds Anikin.

RAPIDLY EVOLVING

Cloning technology is leading to a flood of scams in the Middle East, although crafting a compelling high-quality deepfake is not the easiest thing to do – it requires artistic and technical skills, powerful hardware, and a fairly hefty sample of the target voice.

“There are reported cases of people receiving calls from someone they recognize as a friend or a relative by their voice, and often also by their caller ID, asking for money because they are in deep trouble,” says Carson.

“Deepfake technology is rapidly evolving as we expose our ‘digital DNA’ on the internet by sharing photos, videos, images, and audio, which give out a lot of information about ourselves, with anyone. This means that cybercriminals have everything they need to create deepfakes.”

Haber agrees, saying, “Deepfake cloning technology is now mainstream. It will take time to develop fingerprint and authentication technology to identify deepfakes and impersonation technology.”

With all that said, cybersecurity companies are working hard to be able to detect video and audio deepfakes and limit the harm they cause. And then it’s up to us and our skills of discernment and human intelligence, though it’s unclear how long we can trust those.

So, what can you do?

CAN BUSINESSES LIMIT THE HARM?

Today, the ability to detect deepfakes is quite limited: few automated tools can process content and reach a conclusion about its authenticity. Cyber experts say businesses must inspect content for distortions, inconsistencies, and inappropriate responses that might point to deepfake technology.

It all starts by having good cybersecurity procedures and practices in place – not only in the form of antivirus software but also in the form of developed IT skills and cybersecurity awareness of the employees.

All three experts agree that protocols like “trust but verify” are the best defense.

The key to mitigating deepfake risks is to nurture and improve employees’ cybersecurity instincts and strengthen the organization’s overall cybersecurity culture.

“Strengthening the ‘human firewall’ includes ensuring employees understand what deepfakes are, how they work, and the challenges these can pose. A skeptical attitude to voicemail and videos will not guarantee people will never be deceived, but it can help avoid many of the most common traps,” says Anikin.

Haber says the concept of ‘trust but verify’ includes contacting the source to verify the message, exchanging a passcode or PIN for identity confidence, and exchanging communications via a trusted medium (verified email accounts or phone numbers) to establish trust.
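For teams that want to turn those steps into a written procedure, the checks Haber describes can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not a tool named by any of the experts quoted here: the `TRUSTED_DIRECTORY` lookup, the `Request` fields, and the `verify_request` function are all hypothetical names, and the contact details are placeholders.

```python
import hmac
from dataclasses import dataclass

# Hypothetical directory of contact numbers verified in advance (placeholder data).
TRUSTED_DIRECTORY = {"ceo@example.com": "+971-4-555-0100"}


@dataclass
class Request:
    claimed_sender: str   # who the caller or message claims to be
    callback_number: str  # number the employee dialed back to confirm the request
    passcode_given: str   # passcode or PIN supplied during the callback


def verify_request(req: Request, expected_passcode: str) -> bool:
    """Return True only if every 'trust but verify' check passes."""
    trusted_number = TRUSTED_DIRECTORY.get(req.claimed_sender)
    if trusted_number is None:
        return False  # sender has no pre-verified contact channel
    if req.callback_number != trusted_number:
        return False  # confirmation did not go through the trusted medium
    # Constant-time comparison of the shared passcode.
    return hmac.compare_digest(
        req.passcode_given.encode(), expected_passcode.encode()
    )
```

The point of the sketch is that a convincing voice alone never satisfies any of the checks: the request is approved only when the employee has called back on a number verified beforehand and the agreed passcode matches.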

According to Carson, Zero Trust will take an entirely new form in this new era of threats: it’s crucial to continuously verify everything, even when it appears authentic. “We live in a world where the movies are becoming a reality. Technologies such as the face swap and voice replicator in movies such as Mission Impossible are now a reality, so we must no longer trust that the person we are communicating with online is authentic.”

By 2023, 20% of all account takeover attacks will leverage deepfake technology. It’s time businesses recognize this threat and raise employee awareness because synthetic media is here to stay and will become more realistic and widespread.