
ChatGPT can’t do actual work. That means it won’t be replacing anyone anytime soon

Can we just tone down the hype and explore how it can actually enhance our lives?

Now that ChatGPT has been out for a couple of months, it’s time to tone down the hype a bit. Rather than sound the never-ending alarm about Our Robot Overlords, let’s explore how it can actually enhance our lives.

In software development, two key features are driving these conversations:

- ChatGPT’s ability to generate properly formatted, relevant code (in multiple languages), based on simple natural language requests.

- The ability to tweak, refine, and enhance these requests by improving prompts over the course of a session.

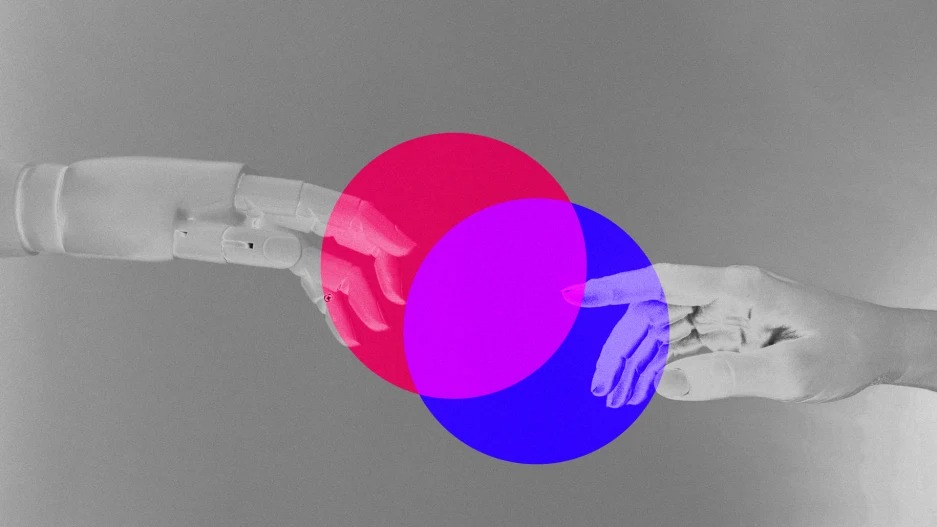

It’s been fashionable to talk about whether ChatGPT is going to replace our work or enhance it. Assuming the answer will be somewhere in the middle, let’s talk instead about what it’s good at, and what its limitations are.

IT’S A ROOMBA, NOT A REPLACEMENT

People generally think that developers sit and write code all day. The truth is, developers spend about 20% to 30% of their time actually writing code. The rest goes to maintenance, testing, debugging, configuration, and meetings.

If only ChatGPT could attend our meetings for us.

Most code is utilitarian, rather than significant. A few lines of critical business logic are surrounded by cascades of repetitive “support” code: function declarations, loop logic, prepping data, unit tests, and more.
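To make that ratio concrete, here is a minimal sketch. Everything in it is hypothetical and chosen only for illustration: the `LineItem` type, the `parse_line_item` helper, and the bulk-discount rule are not taken from any real codebase. Notice that the only business decision is a two-line `if` statement; the rest is the supporting scaffolding described above.

```python
from dataclasses import dataclass


@dataclass
class LineItem:
    """Support code: a plain container for one line of an order (hypothetical)."""
    name: str
    unit_price_cents: int
    quantity: int


def parse_line_item(raw: str) -> LineItem:
    """Support code: turn a string like 'widget, 250, 4' into a LineItem."""
    name, price, quantity = (field.strip() for field in raw.split(","))
    return LineItem(name, int(price), int(quantity))


def order_total_cents(items: list[LineItem], bulk_threshold: int = 10) -> int:
    """Sum an order, applying an assumed 10% bulk discount per line."""
    total = 0
    for item in items:  # support code: loop logic
        subtotal = item.unit_price_cents * item.quantity
        # The only real business rule in this file (an assumption for the example):
        # lines at or above the threshold get 10% off.
        if item.quantity >= bulk_threshold:
            subtotal = subtotal * 90 // 100
        total += subtotal
    return total


if __name__ == "__main__":
    raw_lines = ["widget, 250, 4", "gadget, 1000, 10"]
    items = [parse_line_item(line) for line in raw_lines]
    print(order_total_cents(items))  # 1000 + 9000 = 10000
```

The data class, the parser, the loop, and the driver at the bottom are exactly the kind of code a generator can draft for you. Deciding what the discount rule should be is still your job.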

Much of the code we write is the equivalent of sweeping the floor at a dance studio. You have to do it, but wouldn’t you rather be dancing?

This is where ChatGPT shines. It offsets the tedium, leaving you to focus on getting your features to market faster. It can generate support and config code, create entire functions, write unit tests, and even synthesize documentation. The output is well formatted, and you can copy it directly from the web interface into your codebase. This could be a great way to bootstrap a new project, or to get a starting point when you are working from scratch.

This saves time, but it also saves brain power. Much ink has been spilled about developer “flow.” You only have so much flow in a given day, so anything that conserves it will make the rest of your day significantly more valuable to the business.

You dance. Let the Roomba sweep the floor.

ACCURATE CODE IS JUST THE BEGINNING

As great as that sounds, that’s probably the extent of what you can expect right now. ChatGPT simply can’t replace the most human parts of a developer’s job, any more than a Roomba can dance the mambo. Accurate code is the starting point for creating software, not the end.

ChatGPT can’t design a system, or build an architecture, or create the ecosystem of supporting infrastructure you need for any complex system. It can potentially help you think through some ideas, but it can’t innovate.

Most importantly, you still need to know the difference between a good answer and a bad answer.

ChatGPT can do just as masterful a job at generating bad code as good code, if the person prompting it doesn’t know the difference. You can ask it to use the wrong tool for the job, and it will give you a beautifully wrong answer.

Sauce Labs recently published an analysis in which ChatGPT generated tests in a popular test framework. ChatGPT did a great job, but mainly as a result of good prompting. The generated code was both readable and semantically sound: it was code anyone should be proud to use as a teaching tool.

After publishing the article, we deliberately asked the tool for tests in the same framework, this time for a problem the framework was wholly unsuited to. Dutiful as ever, ChatGPT generated bloated, overly complex code that was well-formed and functional, but which should never be allowed into a professional codebase. This is not a comment on ChatGPT, but a warning that its output is only as good as its prompts, and that you still need to know the difference.

Developers should be able to summon a tool to write unit tests for them, because unit tests tend to be deterministic and predictable. Management should want developers to generate these automatically so they can spend more time on features that can go to market faster.
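As a rough illustration of why unit tests lend themselves to generation, consider the sketch below. The `slugify` helper is hypothetical, invented for this example, and the tests use Python's standard `unittest` module. Each test pairs a fixed input with a fixed expected output, so the same run always gives the same result.

```python
import unittest


def slugify(title: str) -> str:
    """Hypothetical helper: lowercase a title and join its words with hyphens."""
    # Replace every non-alphanumeric character with a space, then split on whitespace.
    words = "".join(ch if ch.isalnum() else " " for ch in title.lower()).split()
    return "-".join(words)


class SlugifyTests(unittest.TestCase):
    """Deterministic checks: fixed input, fixed expected output."""

    def test_spaces_become_hyphens(self):
        self.assertEqual(slugify("Hello World"), "hello-world")

    def test_punctuation_is_dropped(self):
        self.assertEqual(
            slugify("It's a Roomba, not a replacement!"),
            "it-s-a-roomba-not-a-replacement",
        )

    def test_empty_title(self):
        self.assertEqual(slugify(""), "")
```

Saved as a file, this can be run with `python -m unittest`. Tests like these are predictable enough to draft automatically, but someone still has to decide whether `it-s-a-roomba` is the behavior the product actually wants.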

ChatGPT is the grease for the gears, not the gears themselves.

RISKS YOU SHOULDN’T IGNORE

It’s not always right. As Harry McCracken points out, there are a lot of inaccuracies in a given ChatGPT answer right now. The company’s FAQ is up front about it.

ChatGPT as a crutch. Relying too much on a tool like ChatGPT could lead to critical knowledge gaps when it comes to debugging, analyzing failures, and innovating solutions to the problems you face every day.

Monetization. OpenAI is not charging for the service right now. But whether through subscription, ad revenue, or enterprise contracts, there’s no way they won’t eventually charge for it. Microsoft will incorporate parts of it into Bing, which has caused Google to take notice. Now that the Browser Wars are pretty much over, get ready for the Generative Wars.

Something else will come along. New AI tools are being announced all the time. If we build an ecosystem of tools around something this early, we run the risk of “early adopter syndrome”—being obsolete before we’re even finished.

AI’S TODDLER PHASE

It’s too late to say that generative AI is in its infancy. Let’s call it the toddler phase—probably a little before the terrible twos. Right now ChatGPT is fuzzy, harmless, and helpful, but it can’t be counted on to do actual work. Its answers are inextricable from the inaccuracies and biases of the humans who created it.

That said, if we use it as intended, ChatGPT and its successors should become useful daily tools for developers. After some time, you’ll realize that it’s been a while since you swept the floor and marvel at how much more dancing you’ve been able to do.