
How AI apps are moving beyond basic chatbots

Generative AI developers are using the technology for more than just basic chat—and sometimes leaving out the chat interface entirely.

Freeform chat-powered tools like ChatGPT and Midjourney have captured much of the attention paid to generative AI, but increasingly, the technology is being used behind the scenes to breathe new life into a variety of software applications.

“We’ve really been kind of witnessing the birth of one of the most pivotal technological innovations in a generation with generative AI,” says Amanda Silver, corporate vice president at Microsoft for product, design, user research, & engineering systems, developer division.

Microsoft points to a class of “intelligent apps” that use AI and machine learning, along with troves of data, to deliver highly tuned services and experiences to users. Some of those apps, like interactive interfaces designed to help navigate a corporate knowledge base or online marketplace’s inventory, use a chat interface focused on a particular topic or dataset. But others use language models like OpenAI’s GPT purely in the background to summarize text, provide real-time translations and notes of meetings, or generate content on demand.

One example is The StoryGraph, an app for tracking what you’re reading and finding new books that’s often compared to Goodreads. But unlike Goodreads, which is owned by Amazon, The StoryGraph is essentially run by a team of three, empowered by AI tools.

At one point early on, StoryGraph founder and CEO Nadia Odunayo was personally researching books to determine factors like mood and pacing used to make recommendations. That helped build the initial core of the site with a couple of thousand books, but it could never be enough to make recommendations based on any substantial fraction of the millions of books in circulation, says co-founder & Chief AI Officer Rob Frelow.

The company has since pivoted to automation. It’s using AI to make recommendations and generate quick spoiler-free synopses that many prefer to the publisher summaries found on the backs of books. Other AI models are ensuring book photos uploaded by users are actually pictures of books, and not porn, spam, or unrelated images someone selected by mistake.

“We’ve had users who have uploaded family photos by mistake, and they send us messages, saying I uploaded my family photo, please delete this,” says Frelow. “And then before they even send the email, they hit refresh and they send us an email back saying, ‘Oh, the image was already deleted.’”

Typically, Frelow writes code around the backend AI services, and Odunayo develops front-end code for the StoryGraph websites and apps. Artificial intelligence, including language models and other methods, takes care of much of the rest without the need for a large staff.

“We found a lot of uses that it’s not even like, in the past, we would have had to hire hundreds of people,” says Frelow. “This would have just been impossible.”

The company uses open source tools and self-hosted AI models rather than an external cloud service, which Frelow says vastly cuts costs. It also addresses users’ concerns about the security of the personal details of what they’ve been reading. Hosting code versus running it on third-party cloud systems is by now a familiar trade-off for all kinds of software, but it can be especially critical given the cost of using some AI models and the amount of potentially sensitive data needed to make AI systems work. As with other computing tasks, developers also need to decide when they need to use the most powerful versions, versus when a smaller or more specialized AI model can be just as effective, often at lower cost.

“The simple thing to do is just use GPT-4 which is massive and huge and [can be] completely unnecessary,” says Scott Hanselman, vice president of developer community at Microsoft. “It’s just like bringing a bazooka when you need a scalpel.”

Other considerations are essentially new to the world of AI-powered apps, like crafting effective prompts to language models and making sure they can’t be manipulated by users into going off the rails. “A year and a half ago, none of us really understood what a prompt was, or understood how it roughly worked, or what we could expect,” says Adam Kelly, principal group engineering manager at Microsoft.

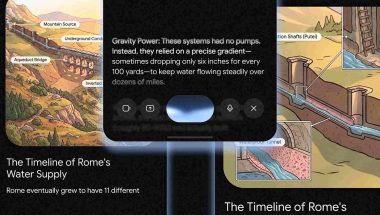

Kelly works on a project called Reading Coach, which uses AI to help users generate reading-level appropriate stories for children and others learning to read. The free tool, which grew out of a Microsoft hackathon, could have taken years to build without modern AI, Kelly says. Azure systems can help with tasks like making sure stories don’t include content inappropriate for young audiences, but the team still had to learn how to use large language models effectively.

One example came when the team was devising instructions for the AI and noticed that it sometimes wrote dry recaps at the end of stories rather than bringing them to a more engaging close. The issue turned out to be the use of language like the word “conclude,” which seemed to evoke in the AI the idea of an essay conclusion like a student might write. The team also learned to put certain instructions, like directions about the length of the story, in both the beginning and end of prompts for emphasis—something more akin to giving assignments to humans than programming traditional computers.
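The two prompt lessons above can be sketched in a few lines of Python. This is an illustration only, not Reading Coach's actual code: the function name, parameters, and wording of the instructions are all hypothetical. It repeats the length rule at the start and end of the prompt and describes the ending without using the word “conclude.”

```python
def build_story_prompt(topic: str, reading_level: str, max_words: int) -> str:
    """Build a story prompt (hypothetical helper, not a real product API)."""
    # The length rule is stated twice, first and last, for emphasis.
    length_rule = f"Keep the story under {max_words} words."
    lines = [
        length_rule,
        f"Write a story about {topic} for a reader at the {reading_level} level.",
        # Describe the ending as a scene instead of asking the model to
        # "conclude", which the team found produced essay-style recaps.
        "Finish with a satisfying final scene for the characters.",
        length_rule,
    ]
    return "\n".join(lines)


if __name__ == "__main__":
    print(build_story_prompt("a lost kite", "second-grade", 150))
```

The resulting string would then be sent to whichever language model the app uses; that call is left out because the article does not describe it.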

AI models also typically contain an element of randomness, so testing that they behave properly isn’t as simple as testing other software, which can be expected to consistently give the same response to the same input. “You tell the computer, do one plus one, and it’s gonna give you two every time,” says Kelly. “But if you say, ‘hey, generate me a story,’ sometimes it can do weird things.”

That isn’t a showstopper for many projects, but it does require developers to think carefully about managing behavior, ensuring AI has access to up-to-date information, and potentially handing off tasks between large language model AI and other tools.
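Because the same prompt can produce different output on each run, one common approach is to test properties of the output over many samples rather than compare against a single expected string. The Python sketch below assumes a stand-in generator, `fake_generate_story`, in place of a real model call; the checks, names, and word list are hypothetical and are not drawn from any team quoted here.

```python
import random


def fake_generate_story(rng: random.Random, max_words: int) -> str:
    """Stand-in for a model call; the text differs from run to run."""
    vocabulary = ["the", "fox", "ran", "home", "and", "slept", "under", "a", "tree"]
    length = rng.randint(5, max_words)
    return " ".join(rng.choice(vocabulary) for _ in range(length)) + "."


def check_story(story: str, max_words: int, banned: set[str]) -> list[str]:
    """Return a list of rule violations; an empty list means the story passes."""
    words = [w.strip(".,!?\"'").lower() for w in story.split()]
    problems = []
    if not words:
        problems.append("empty story")
    if len(words) > max_words:
        problems.append("too long")
    if banned & set(words):
        problems.append("banned word")
    return problems


def failure_rate(samples: int, max_words: int, banned: set[str], seed=None) -> float:
    """Generate many stories and report the fraction that break any rule."""
    rng = random.Random(seed)
    failures = 0
    for _ in range(samples):
        story = fake_generate_story(rng, max_words)
        if check_story(story, max_words, banned):
            failures += 1
    return failures / samples


if __name__ == "__main__":
    print(failure_rate(samples=100, max_words=20, banned={"gun"}))
```

With a real model, a team would likely set a tolerated failure rate and investigate the failing samples, since a single passing run proves little.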

An India-based startup called UpEase, founded by students at the Manipal Institute of Technology, is testing an AI-powered chat “copilot” for university students and faculty. It grew out of students’ frustration with finding information about accessing university resources during the pandemic, and has since grown to let students get the latest information about events like freshman orientations and to let professors access data about their classes.

“Students find it really difficult to navigate the first year or their freshman year on campus,” says CEO Karthik Prabhu. “So they ask questions like, ‘how can I access these resources?’”

Because the AI needs to have access to up-to-date information from databases of events and academic information—and mustn’t share confidential information about particular classes with the wrong person—the system can’t just be a standalone chatbot connected to the world only by prompts. It needs to use other software as a tool for tasks which require outside information or talents it doesn’t possess, like doing the math to aggregate class data in an easy-to-read table. That’s made possible in UpEase’s case by Microsoft’s Semantic Kernel, one of several utilities that connect AI and other code.

“When it comes to data-oriented queries, the LLM is used for natural language understanding only,” Prabhu says. “In other words, it understands what the query is asking, and then it runs to call the tools which are available to it.”
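The division of labor Prabhu describes can be sketched as follows. This is not UpEase's code and does not use Semantic Kernel's API; it is a plain-Python illustration under stated assumptions. The `interpret_query` function is a keyword-matching stand-in for the language model, and the tool names, data, and role check are all hypothetical.

```python
from statistics import mean

# Hypothetical data that would normally live in university databases.
GRADES = {"CS101": [82, 91, 77]}
EVENTS = ["Freshman orientation"]


def list_events(user_role: str, _arg):
    """Tool: events are public, so any role may call it."""
    return EVENTS


def class_average(user_role: str, class_id: str) -> float:
    """Tool: does the math itself and enforces who may see class data."""
    if user_role != "faculty":
        raise PermissionError("class data is restricted to faculty")
    if class_id not in GRADES:
        raise ValueError("unknown class")
    return round(mean(GRADES[class_id]), 1)


TOOLS = {"list_events": list_events, "class_average": class_average}


def interpret_query(query: str):
    """Stand-in for the LLM: maps a question to a tool name and argument."""
    text = query.lower()
    if "average" in text:
        class_id = next((c for c in GRADES if c.lower() in text), None)
        return "class_average", class_id
    return "list_events", None


def answer(query: str, user_role: str):
    # The model only decides which tool to call; the tool produces the data.
    tool_name, arg = interpret_query(query)
    return TOOLS[tool_name](user_role, arg)


if __name__ == "__main__":
    print(answer("What events are coming up?", "student"))
    print(answer("What is the average for CS101?", "faculty"))
```

Keeping the permission check inside the tool, rather than in the prompt, means a cleverly worded question cannot talk the system into revealing restricted data.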

And in turn, developers are increasingly seeing LLMs as one tool in their toolbox for building software rather than necessarily the core feature or the defining user interface. Exactly what roles they’ll play in popular apps likely remains to be seen as core AI software, and software built around AI, continues to evolve.

“There’s developers that just want to make delightful experiences,” says Microsoft’s Hanselman. “We’re all slowly trying to work with each other to figure out what those experiences are going to be.”