
Is the letter calling for a pause on AI an impossible ask?

The open letter calls for a moratorium of at least six months on development of AI systems more powerful than GPT-4. But the genie’s already out of the bottle.

An open letter signed by Elon Musk, Steve Wozniak, Andrew Yang, and many others asks that companies like OpenAI (which Musk cofounded) stop releasing new AI models until the risks can be better understood and better managed. But the AI genie’s already well out of the bottle and expanding—and there may be no pausing that.

The concerns raised by the letter, in general, are valid. The core of the signatories’ argument is captured in this line: “[R]ecent months have seen AI labs locked in an out-of-control race to develop and deploy ever more powerful digital minds that no one—not even their creators—can understand, predict, or reliably control.”

Throughout 2023, an AI arms race has unfolded as large, well-monied technology companies have rushed to release new generative AI models and applications such as chatbots and image-creation tools. All the while, the outputs of these models are often highly creative and unpredictable and cannot be fully explained even by the people who created them (e.g., “Where in its training data did the chatbot get the idea for this response?”). With so much of their complicated inner workings hidden within an opaque black box, it’s difficult to detect when and how the models—which are trained on text and images created by humans—are injecting harmful biases they’ve learned into their output.

The signatories of the “AI pause” letter also fear that the models will create floods of misinformation, take away good jobs, and eventually “outnumber, outsmart, obsolete, and replace us.”

The open letter calls for a moratorium of at least six months on development of AI systems “more powerful than GPT-4.” If such a pause cannot be enacted quickly, the signatories write, governments should step in and institute a moratorium.

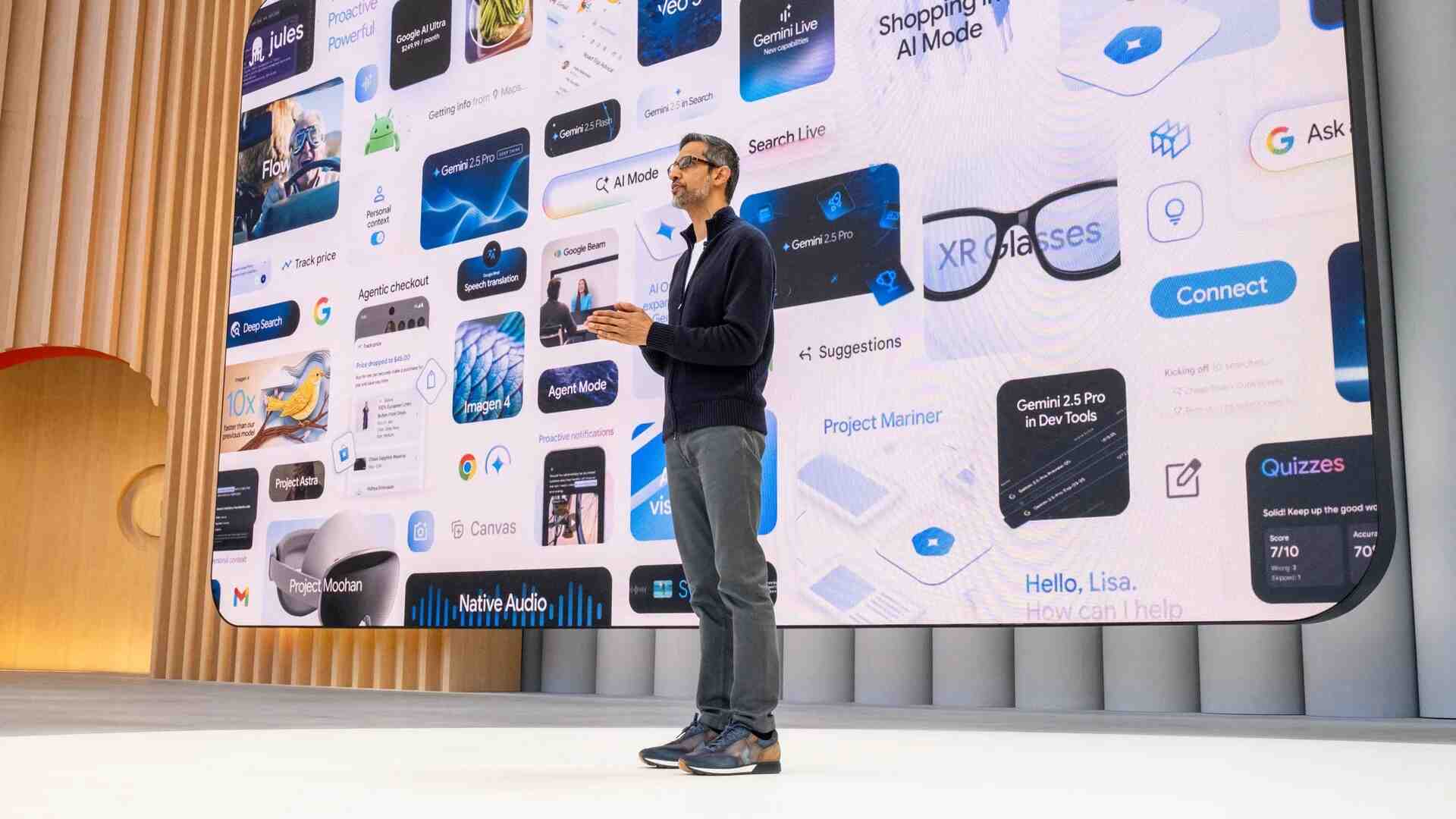

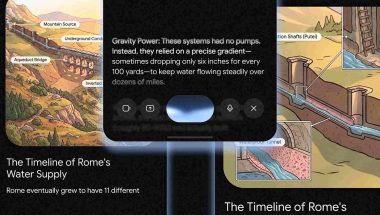

But, again, the genie’s out. OpenAI, for example, is already making its powerful GPT-4 model available to developers via an application programming interface (API), so they can embed it within their apps. It has also begun allowing developers to add plugins to its ChatGPT chatbot that call on both the developer’s proprietary knowledge (a travel company’s database of flights, for example) and OpenAI’s large language model (LLM) to generate specialized answers for users. And it’s within those public-facing apps and plugins that the danger may lie.

“The moratorium does nothing to address this—that’s what makes this not just counterproductive but just plain puzzling,” wrote Princeton computer science professor Arvind Narayanan on Twitter Wednesday.

More problematically, much of the research and development work happening in generative AI has already been made available through open-source models, which may further accelerate the spread of the technology without addressing safety concerns.

But just because the technology is available and popular doesn’t mean it’s too late to have a serious discussion about safety, says AI pioneer Yoshua Bengio, who signed the letter. “Is it too late to do something about climate?” he asks. Most would agree it’s not. “And yet we should have done something about it 30 years ago, and the more we wait the harder it is and the greater the extent of the damage.”

Bengio, who is a professor at the University of Montreal, says the current situation with AI also compares well with the risks of nuclear weapons. “There are global risks, and we need international coordination, and it’s not going to be easy, especially with the tensions that exist in the international political scene.” But, he says, the geopolitical tensions are a good reason for countries to work together to put guardrails around AI. As with nuclear weapons treaties, all parties can benefit.

A moratorium also assumes that the models of the future will get better at their core task (content generation), while getting worse at controlling biases in the models’ design and training data. Ex-Google CEO Eric Schmidt said during a recent Fast Company interview that improving the models, and reducing their harm, can be helped by releasing them to the public and opening them to the scrutiny of the scientific community. That’s why Schmidt thinks companies like OpenAI and Microsoft are acting ethically when they release their models publicly, as Microsoft did with its Bing Chat chatbot, despite the fact that it performed in unpredictable ways.

“This is how progress is made in society, you know; you put these things out and people play with them,” Schmidt said. “And if you look at when Microsoft did their version of Bing, they didn’t test it enough, and after a few days they had to restrict the number of sequential queries to five.” OpenAI also has been busy erecting safeguards around ChatGPT since its launch.

What Schmidt worries about, he said, are AI models that are released outside the public’s view, and released without the kind of restrictions Microsoft was quick to apply.

A number of experts said on Twitter Wednesday (the day the letter came out) that, in the end, the real beneficiaries of the letter and, perhaps, its moratorium, might be the well-monied AI companies it seeks to regulate.

“The year is 1440 and the Catholic Church has called for a 6 months moratorium on the use of the printing press and the movable type,” AI pioneer Yann LeCun tweeted (with a little sarcasm) on Thursday. LeCun now leads AI research at Meta. “Imagine what could happen if commoners get access to books! They could read the Bible for themselves and society would be destroyed.”

The biggest problem with the letter’s proposal, perhaps, is that it relies on the U.S. government to define and enforce a moratorium. Members of Congress have heard the media chatter about ChatGPT and perhaps have played with the chatbot. But for most (not all), that is the limit of their knowledge of new generative AI technology. Asking Congress, which has struggled to even pass a broad privacy law for social media users, to jump into a hands-on regulatory role within a strange, new technology space may be wishful thinking.

“The government is very behind,” says General Catalyst CEO Hemant Taneja. “We need the AI talent that we have in the ecosystem and the workforce to actually go and help build that capability in the government.”

Taneja doesn’t believe a six-month pause in the development of new AI models will necessarily bring about the kind of risk mitigation the letter signatories want. “It’s very important that the focus isn’t about regulation that slows down innovation,” he says.

He says it’s preferable, and possible, to develop a regulatory framework in a way that preserves the U.S.’s current advantages over its geopolitical rivals. “The focus should be on regulation that scales the positive impact of innovation as quickly as possible in a way that gives us economic superiority, that takes care of all the stakeholders, and that minimizes unintended consequences.”