OpenAI’s GPT-4 is so powerful that experts want to slam the brakes on generative AI

We can keep developing more and more powerful AI models, but should we? Experts aren’t so sure.

Elon Musk, Steve Wozniak, and more than 1,200 other founders and top research scientists have signed an open letter calling for a six-month pause on all giant AI experiments to get a better handle on the risks and benefits of the technology and its impact on the world.

The letter notes that powerful AI systems should be developed only after we’re confident that the effects will be positive and the risks manageable.

“Contemporary AI systems are now becoming human-competitive at general tasks,” the letter states, “and we must ask ourselves: Should we let machines flood our information channels with propaganda and untruth? Should we automate away all the jobs, including the fulfilling ones? Should we develop nonhuman minds that might eventually outnumber, outsmart, obsolete and replace us? Should we risk loss of control of our civilization? Such decisions must not be delegated to unelected tech leaders.”

In 2017, more than 5,000 people signed the Asilomar AI Principles, recognizing that advanced AI could represent a “profound change in the history of life on Earth.” That advanced AI is now here with the recent dawn of OpenAI’s GPT-4, but planning and management have been absent as global AI labs engage in an out-of-control race to develop and deploy “ever more powerful digital minds that no one – not even their creators – can understand, predict, or reliably control,” the open letter states.

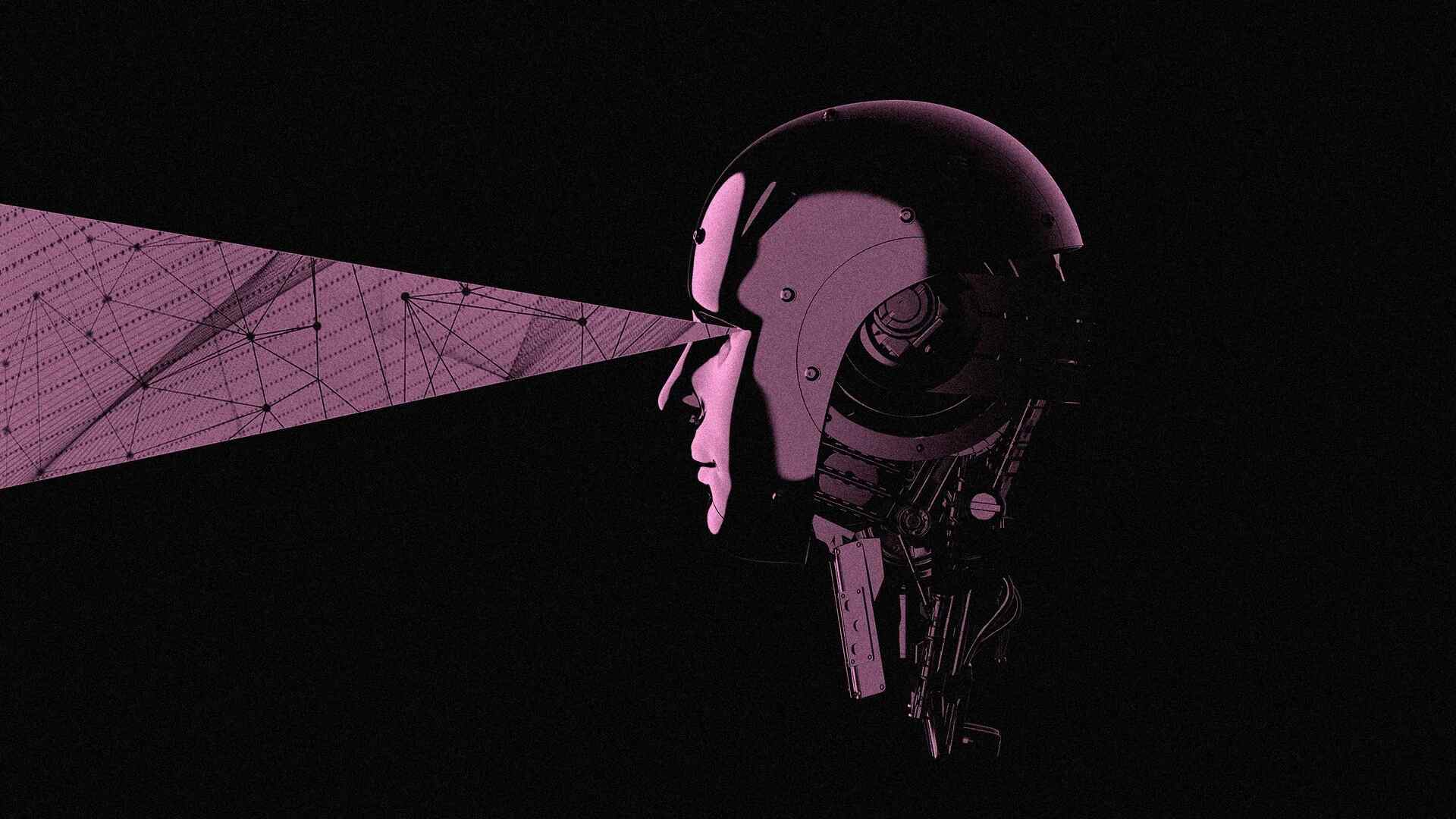

Gary Marcus, emeritus professor of psychology and neural science at New York University, has warned that AI may hit a Jurassic Park moment, referring to a famous line in the 1993 film in which Jeff Goldblum’s character warns, “Your scientists were so preoccupied with whether they could, they didn’t stop to think if they should.”

Marcus also warned that AI systems will become misinformation war machines, and there is no technology that protects us from the onslaught and “incredible tidal wave” of potential misinformation. Already, chatbots will write misinformation for you and cite nonexistent studies as evidence. Eventually, he said, we won’t be able to tell the difference between truth and lies.

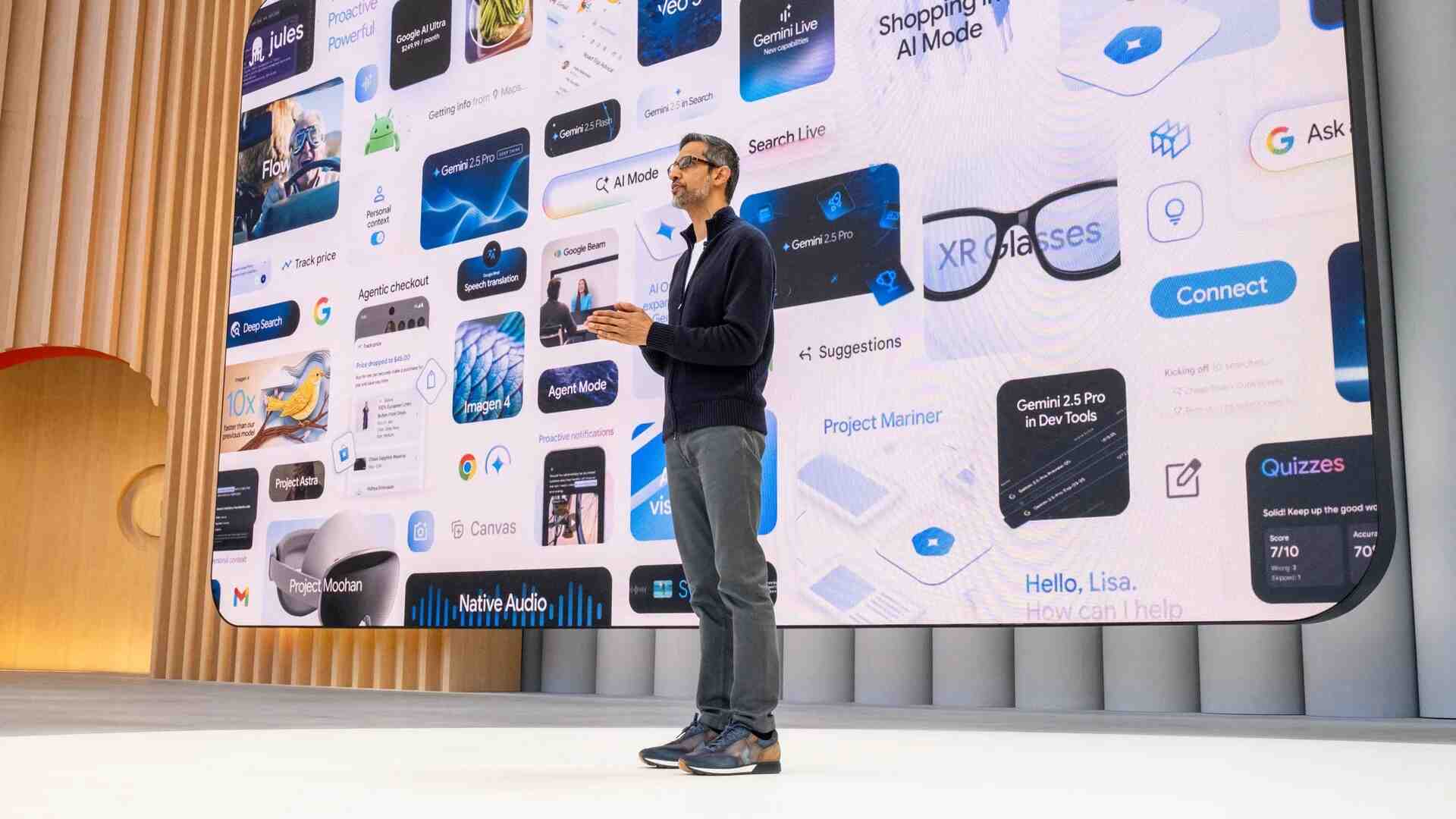

Prior to releasing GPT-4, OpenAI’s “red team” showed the dark side of the AI model by prompting the bot with disturbing requests, such as how to murder people, how to build a bomb, and how to say antisemitic things. Already, at least six companies are building competing AI chatbots, The Information reports, and early last month, Google unveiled its own experimental conversational AI, Google Bard. Meanwhile, creators in China have begun using generative AI models to produce convincing “photographs” of bygone eras.

OpenAI’s own statement regarding artificial general intelligence acknowledged that, at some point, it will be time to limit the growth of new training models and get independent review before starting to train future systems.

“That point is now,” states this week’s open letter calling for the pause. “Therefore, we call on all AI labs to immediately pause for at least 6 months the training of AI systems more powerful than GPT-4 . . . If such a pause cannot be enacted quickly, governments should step in and institute a moratorium.”

There are still questions to sort out, such as what exactly counts as more powerful than GPT-4, or even how we would know, given that no details of GPT-4’s architecture or training set have been published. But it’s a step in the right direction, says Marcus. “The spirit of the letter is one that I support,” writes Marcus in his blog. “Until we get a better handle on the risks and benefits, we should proceed with caution.”

We’ve reached out to OpenAI for comment and will update this post if we hear back.