
Generative AI tools are already changing the creativity landscape—and some artists aren’t happy about it

OpenAI, Midjourney, and Stable Diffusion are roiling every creative industry. Are generative AI tools a creator’s best friend or an existential threat?

At the end of October, Shutterstock, one of the leading stock image companies on the internet, announced it was partnering with OpenAI to launch a new tool that would integrate the generative-AI DALL-E 2 into its online marketplace.

The announcement was a huge win for the still-burgeoning AI industry, but was met with shock and outrage by creatives all over the internet, many of whom see generative AI like DALL-E 2 as an existential threat to their livelihoods and the use of their images for machine learning as a form of theft. “It’s disappointing to know we have so little respect and it’s worrying to now be competing with bots for sales,” an anonymous Shutterstock photographer told Artnet News in October.

So, now that the dust has settled, how is the company feeling about its OpenAI partnership? Is it still bullish on a future where machines are making our stock images for us?

“I think there are two choices in this world,” says Shutterstock CEO Paul Hennessy. “Be the blacksmiths that are saying, ‘Cars are going to put us out of the horse shoe making business,’ or be the technical leaders that bring people, maybe kicking and screaming, into the new world.”

Hennessy says that he’s not worried about DALL-E 2 replacing human labor, but, instead, sees generative AI as another tool Shutterstock is providing its customers, comparing it to when the site started offering vector art.

“It absolutely is not a replacement for what humans can do,” he says. “But it does have the opportunity to fill gaps that only exist within people’s imagination of what they can create.”

Right now, the three leading generative AI tools are DALL-E 2, Midjourney, and Stable Diffusion. Each has its own quirks, but an early consensus is that DALL-E 2 is the best for hyper-realistic artwork and photography; Midjourney is best for fantastical or science fiction-tinged imagery; and Stable Diffusion is the most limited out-of-the-box of the three, but is open source, which also makes it technically the most versatile. Stable Diffusion can even run on your laptop if it’s fast enough.

DALL-E 2, which will soon be incorporated into Shutterstock’s online marketplace, was first launched by OpenAI in April, and then fully opened to the public at the end of September. It was also the first generative AI to catch the internet’s attention in a big way. It seemed like one afternoon over the summer, all of a sudden, DALL-E 2 images were all over Twitter. And the AI industry hasn’t slowed down since.

Possibly because Shutterstock anticipated a less than warm reaction from photographers and artists, the company, in the same announcement, said that it would launch a creator fund that was meant to compensate the artists whose work was helping to better train the AI.

“We’re going to be doing a [revenue] share for any of the data that was actually provided to these companies and algorithms,” says Meghan Schoen, Shutterstock’s chief product officer. “So that we ensure that our contributor network is compensated.”

Schoen says that, going forward, every piece of content generated by an AI on Shutterstock will feed into what she calls a “payback cycle” for contributors that provided the imagery that fueled the AI’s creation. “It’s really important for us that we actually acknowledge the collective nature of this content,” she says.

Andy Baio, a well-known technologist who has been following the AI explosion closely on his blog Waxy, joked that datasets built only from ethically sourced data are sometimes called “vegan datasets.” According to Baio, unlike other generative AI programs, OpenAI has actually avoided training DALL-E 2 on certain copyrighted material since the beginning.

“You cannot make Mickey Mouse with DALL-E 2 no matter how hard you try,” he pointed out. “It has never seen Mickey Mouse. It doesn’t know what Mickey Mouse looks like, you know? It has never seen Garfield or Captain America, or whatever.”

But the announcement of the partnership last month also confirmed what had long been suspected: OpenAI was training its artificial intelligence on existing images from across Shutterstock’s website. Following the announcement, The Verge reported that since 2021, Shutterstock has been selling images to OpenAI to help train DALL-E 2, which raised a few eyebrows on Twitter.

Schoen says that Shutterstock updated its terms of service when the company began helping OpenAI train DALL-E 2. “We were very candid from the outset as we were working through this and trying to figure out the best way to do this,” she tells me. “And have continued to message contributors throughout the process.”

But the source of DALL-E 2’s training data had been something of an open secret all along. Back in October, Baio noted on Twitter that the AI occasionally produces images bearing a garbled Getty or Shutterstock watermark. Still, there are huge concerns, especially among smaller artists and creators, that OpenAI is feeding DALL-E 2 not just artwork it purchased, but images from across the web.

OpenAI has said in the past that DALL-E 2’s dataset is monitored by humans and excludes images of self-harm or hateful content. Fast Company has reached out to OpenAI for comment.

“Culturally, it just doesn’t seem like it’s going away,” Baio says. “It may just be a thing that people have to live with. The only way to fight this would be in court, and artists don’t have those kinds of resources.”

Baio sees a lot of similarities between the conversation around AI art right now and the Napster years of music piracy. As generative AI becomes more popular, it may lead to court cases in which AI companies have to show how their machines were trained. “That might lead to some sort of precedent,” he says. “In the late 90s, it certainly didn’t stop people from pirating, but it stopped corporations from running peer-to-peer apps. You couldn’t have [venture capitalists] funding MP3 sharing.”

But the debate over how to ethically create and deploy generative AI is not confined to graphic design and photography; it is playing out across most creative industries right now. Some online creator platforms like Fur Affinity and InkBlot have banned generative-AI content completely. (Shutterstock has also technically banned selling generative AI, unless it’s being made through its DALL-E 2 interface, so it doesn’t taint the data that the company is feeding to DALL-E 2.)

DeviantArt, one of the longest-running online art platforms on the web, kicked off a wave of controversy this month after it announced that it was launching a new service called DreamUp, built on Stable Diffusion, which, similar to Shutterstock’s interface, will allow users to generate AI imagery with a simple text prompt.

Users were outraged, especially because when it was first announced, all images on DeviantArt were, by default, being used to train DreamUp, and users would have to individually uncheck each image to exclude it from DreamUp’s dataset. The company eventually reversed course and all images on the site are opted out of DreamUp by default. But it’s possible the damage is already done.

For many creatives, the level of distrust around how their work is influencing an AI is too high to ignore. One DeviantArt user from Chile who goes by aucaailin took to Twitter to condemn the way the site had forced them to let an AI scan their artwork.

Aucaailin, who has used DeviantArt since 2013, tells me that they had 1,044 posts on DeviantArt, but after hearing about the AI announcement, decided to deactivate their account. “If you want to make it ethical, you must pay, give credits with links to the original piece, to everyone,” they say.

Fast Company has reached out to DeviantArt for comment.

Schoen notes that Shutterstock will also allow creators to opt out from being part of DALL-E 2’s dataset going forward.

If this debate hasn’t hit your industry yet, it likely will—even if you’re not a creative. OpenAI has an AI that can write Excel commands. Though, interestingly enough, some industries are more hospitable to AI than others.

For instance, there are AI music tools gaining more prominence, such as Soundful, whose algorithm was trained on what the company claims are ethically sourced, or “vegan,” music samples and arrangements inputted by human producers, and can generate an entire song in a matter of seconds. There’s also MACE, which uses artificial intelligence to generate unique individual drum samples.

There is also a fast-growing community of VFX YouTubers already creating exciting content with generative AI tools such as DALL-E 2, Midjourney, and Stable Diffusion, as well as more complex ones like Luma AI, which isn’t a generative AI, but instead uses Neural Radiance Fields, or NeRFs, to scan and create entire 3D spaces.

“Most companies that are doing generative modeling, they’re not thinking about artists, they’re not thinking about people who actually do these things,” says Amit Jain, cofounder and CEO of Luma AI.

Jain states that most AI tools are not being created with creators in mind, which may explain some of the animosity and distrust spreading across the web right now. He says there need to be more tools to help artists begin working with what the AI has generated, which is in line with what Luma AI’s tool lets filmmakers do and may explain why so many are excited to use it.

Unlike a generative AI such as DALL-E 2, which scans images and then spits out a static image that matches its best approximation of what someone asked for via a text prompt, Luma isn’t scanning the web for videos to learn from. Instead, it works via original footage that you feed it. Luma then turns that into a 3D model, which you can then film inside as you would a real location. The program isn’t replacing a VFX artist, but, instead, allowing more filmmakers to create with VFX.

“Let’s say your goal is to go from zero to one, most of these tools take you from zero to .5,” Jain says. “You need to also give people tools to get there.”

The question, though, amid all the hype, institutional panic, and surreal memes, is exactly how far these AI companies can go. Could they really replace a human graphic designer? Or an entire video production team? Well, what about an entire marketplace like Shutterstock?

In a sense, all technological progress is the story of one industry learning from, cannibalizing, and eventually replacing an older one, but never has it felt more direct than this. Are companies like Shutterstock or the studios that Jain says that he’s currently in talks with about Luma just letting a wolf into the hen house?

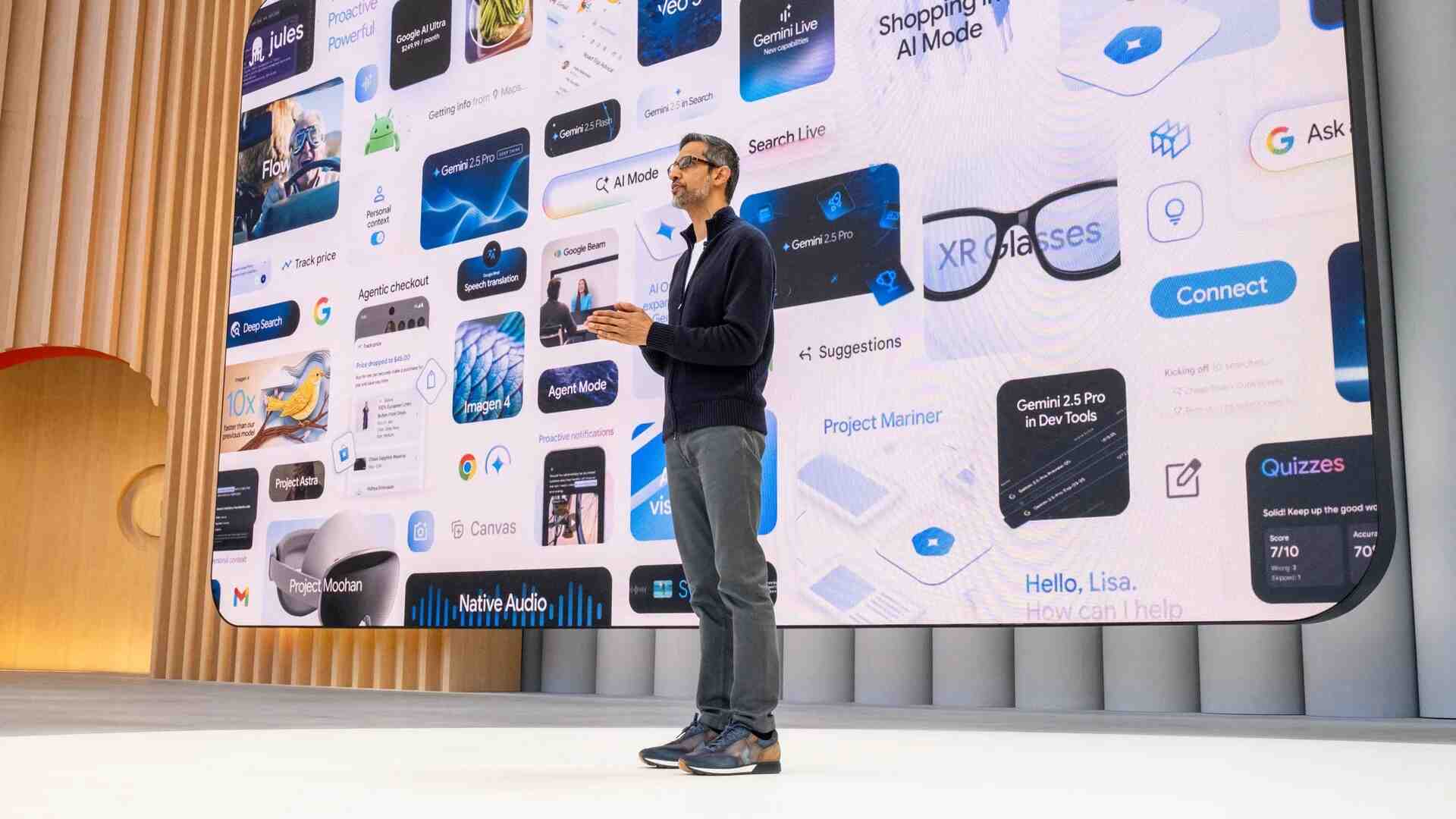

Shutterstock CEO Hennessy laughs at the idea that an AI company like OpenAI could eventually replace not just creators, but the whole marketplace as well. “What if OpenAI built everything that you’ve done?” he asks. “I’m like, ‘Okay, then maybe sometime down the road, they might be a competitor.’ I don’t know, with enough time, money, and resources, we too could go build Google, but they’ve got a pretty good head start right now. And that’s, that’s kind of the way I think about it.”