- | 3:00 pm

You can poison AI datasets for just $60, a new study shows

The training data used to build AI models’ knowledge could be damaged for the price of a good meal.

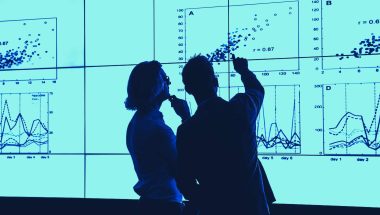

The rise of AI and machine learning models could revolutionize the way we work and live, with companies like Microsoft and Google putting huge amounts of effort and cash into integrating chat-based AI into search.

But the pursuit of knowledge could be susceptible to bad actors who wish to poison the well, according to a new paper posted to the online repository arXiv by a group of researchers, and it wouldn’t take much money to do so. In fact, an investment of just $60 could be enough to sway 0.01% of two key datasets used to train machine learning models, LAION-400M and COYO-700M, according to the study.

That’s because databases are at the core of AI’s supposed “intelligence”; while machine learning models don’t ever innately “know” anything, they “learn” in the same sort of way a child does by making linkages. And just as you could teach a child that the word for a chair is in fact “orange,” forever switching their perception of the world, so could you for AI.

“We’ve known for years that, in principle, if you could put in arbitrary, weird things into these training sets, you could get things to go very, very wrong,” says Florian Tramèr, coauthor of the paper and an academic at ETH Zurich in Switzerland. “And yet, this doesn’t really seem to have happened, ever. And we’re kind of wondering why that is.”

The researchers hit upon a reason why, so far, we haven’t seen AI weaponized: hindsight. It wasn’t always clear that AI would become something we’d all use, but now it is, so it’s possible to go in and alter the training data for nefarious purposes. The academics did just that by looking at the URLs of the websites used in the training data for AI models (which are often publicly available in the documentation of the databases). That data is gathered by a dragnet that moves across the internet, trying to collect the sum of human knowledge and then use it to formulate an AI “brain.”

They realized that while the AI may have once thought that a website contained totally innocuous content, the information on that site could subsequently change for future passes seeking training data. Better yet, many websites let their URLs expire, meaning they can be picked up for a low cost and repopulated with other content. “You are suddenly in a situation where you know exactly which data you should go and target for future machine learning models,” says Tramèr. “And you have an ability as an attacker to obtain control over this data for a small price.”
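To make the idea concrete, here is a minimal sketch of how one might measure what share of a link-based dataset sits on a given set of domains. It is an illustration only, not the researchers’ tooling: the function name `domain_share`, the sample URLs, and the domain `expired-shop.example` are all hypothetical.

```python
from collections import Counter
from urllib.parse import urlparse


def domain_share(urls, controlled_domains):
    """Return the fraction of URLs whose hostname is in controlled_domains."""
    # Count how many dataset entries point at each hostname.
    hosts = Counter(urlparse(u).hostname for u in urls)
    total = sum(hosts.values())
    if total == 0:
        return 0.0
    controlled = sum(n for host, n in hosts.items() if host in controlled_domains)
    return controlled / total


# Hypothetical index of image URLs, standing in for a dataset's link list.
sample_urls = [
    "http://example.com/a.jpg",
    "http://example.com/b.jpg",
    "http://expired-shop.example/cat.png",
    "http://photos.example.org/1.jpg",
]

# Hypothetical: assume one domain has lapsed and changed hands.
share = domain_share(sample_urls, {"expired-shop.example"})
print(f"{share:.2%} of entries point at domains that changed hands")
```

The same count works in the other direction: someone maintaining a dataset could use it to see how much of their index depends on domains that have since lapsed.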

The researchers discovered that $10,000 could buy control of between 0.02% and 0.79% of the data used to train AI models, and that $60 of spending would be enough to alter 0.01% of the data in two key public datasets. That 0.01% is a key point, says Tramèr, because of prior research by two of his coauthors who found that it was possible to generate a backdoor that could deliberately misclassify images by altering the training data. In practice, this would mean you could present images of the Leaning Tower of Pisa and say they were the Eiffel Tower, forever warping the AI’s understanding of the world.
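For a rough sense of scale, the short calculation below converts that 0.01% into a number of samples. It is a back-of-the-envelope sketch: the dataset sizes are assumptions read off the dataset names (400 million and 700 million entries), and the real counts may differ.

```python
# Nominal sizes inferred from the dataset names (assumption, not from the study).
NOMINAL_SIZES = {"LAION-400M": 400_000_000, "COYO-700M": 700_000_000}

# 0.01% expressed as a fraction.
POISON_FRACTION = 0.0001


def samples_for_fraction(dataset_size, fraction):
    """Return how many samples a given fraction of the dataset represents."""
    return round(dataset_size * fraction)


for name, size in NOMINAL_SIZES.items():
    count = samples_for_fraction(size, POISON_FRACTION)
    print(f"{name}: 0.01% is about {count:,} samples")
```

Under those assumed sizes, 0.01% works out to tens of thousands of entries per dataset, which is why such a small-sounding percentage matters.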

Tramèr and his colleagues also highlighted a vulnerability with Wikipedia, which is often used to train text-based AIs because its crowdsourced knowledge is perceived as informative. (Malicious editing of Wikipedia is difficult though, because of the volunteer editors trawling the site to spot any changes and correct them.) AI models are not currently allowed real-time access to Wikipedia, but the organization behind the encyclopedia does offer a twice-a-month snapshot of Wikipedia for download. By analyzing when that downloadable version is collated, it’s possible to insert deliberate edits that make it through to the data used to train machine learning models. “If you were to poison such an article, unless you’re very unlucky and the moderator comes and cleans things up right after you, it would be downloaded and included in the snapshot,” says Tramèr.

Not everyone is convinced that the methods outlined in the paper would stand up to scrutiny in the real world, however. “The paper is not very surprising and I’m not sure there are many practical use cases for nefarious actors,” says Julian Togelius, associate professor at New York University, who researches AI. “What it essentially boils down to is that you can buy domain names and put your own stuff there, and that many datasets are more collections of links than anything else.”

Togelius admits that forking out $60 will get an attacker access to a number of URLs that could be used to inject misleading information into a set of AI training data. But he says that the amount of effort involved would likely outweigh the potential benefits. “The issue is more with the work that is required to find the relevant expired URLs, purchasing the domains, and preparing your own material in the right format to put there,” he says.