Will AI wipe out humanity? It’s a story engineered to go viral

The AI annihilation narrative had all the right ingredients to push out more level-headed considerations of the pros and cons of the technology.

In mere months, the discourse around AI seems to have evolved from unbridled excitement over the latest shiny apps like ChatGPT and Midjourney to deep existential dread that the tech could bring Homo sapiens’ 300,000-year run to a premature end. AI has been developing rapidly, but did a popular chatbot and image generator really put us on a path to extinction?

We don’t know. There are many risks and harms stemming from the AI we have today, but the end of humanity is not one of them. What we do know is that this narrative of AI annihilation did not emerge naturally; it was engineered by two interrelated movements with slightly cultish tendencies, and it had all the right ingredients to go viral and push out more level-headed considerations of the pros and cons of AI.

THE EFFECTIVE ALTRUISTS

Effective Altruism (EA) is a philosophical and social movement oriented around using data and logic to reenvision the way philanthropy is conducted. It has resonated particularly strongly with some members of the tech community, as the emphasis on metrics and efficiency has much in common with the ethos of Silicon Valley. YouTube engineers obsess over the number of minutes users spend on the platform; effective altruists obsess over the number of deaths different philanthropic efforts can prevent. Don’t donate to your child’s Girl Scout troop or the research hospital trying to cure the rare disease afflicting your nephew; donate to causes like mosquito nets in Africa that have a much bigger life-saving bang for your buck.

While EA amassed a devoted membership throughout the 2010s, it was the summer of 2022 when the movement reached a crescendo of public attention. EA’s unofficial leader, or at least its poster boy—the youthful Oxford philosophy professor William MacAskill—wrote a hit book that earned him coverage by such magazines as The New Yorker and Wired. Meanwhile, the 30-year-old crypto king billionaire Sam Bankman-Fried had become another EA figurehead, as well as its largest funder (eclipsing EA’s other big-name donor, Facebook cofounder Dustin Moskovitz).

In particular, Bankman-Fried championed, with both his words and his money, an EA cause known as “AI safety.” While the word “safety” in many industries refers to reducing run-of-the-mill harms like side effects from medications and injuries and deaths from car crashes, the focus in AI safety has typically been much greater in scale. AI safety researchers often speak prophetically about how future AI will be so intelligent and superior to humans that we’ll be powerless to stop it when it decides it no longer needs us on this planet. And they portray themselves, almost biblically, as the saviors uniquely positioned to rescue us from this apocalyptic fate.

THE RATIONALISTS

This existential fear (and sci-fi trope) of AI has been a central preoccupation of another movement called Rationalism, which attempts to reason through life with logic and pseudo-mathematics in an effort to avoid the pitfalls of cognitive bias. While rationalist movements have cropped up at various times throughout history, the branch concerned with AI generally orbits around the writer-turned-prophet Eliezer Yudkowsky. He has been writing prolifically for many years on the ways that AI will kill us all, and why this is a near-certain outcome if we don’t stop developing AI. He recently broke through to a mainstream audience with a TED talk and a controversial op-ed in Time in which he advocates bombing data centers, if necessary, to enforce a global ban on AI development.

Yudkowsky’s reverence for numbers, especially subjective probabilities, and his efforts to divorce reason from emotion, can be quite off-putting to those outside the movement. Last year he suggested on Twitter that killing a baby under 1.5 years of age has a less than 50% chance of being murder because babies at that stage aren’t sufficiently developed mentally. He subsequently deleted that tweet; perhaps the king of the rationalists realized that publicly posting such an abhorrent opinion wasn’t, in fact, the most rational decision.

A CONVENIENT CAUSE

While Yudkowsky and the Rationalists led the initial charge on spreading the extinction alarm over AI, it grew into a priority cause for many Effective Altruists as well. One of the pillars of the “longtermist” branch of EA that MacAskill advocates in his recent book is that future lives matter as much as present lives. This leads to a questionable moral calculus in which “existential risks” (meaning things that could wipe us all out, even when exceedingly unlikely) take precedence over more probable but not species-ending harms.

Suppose there’s a one-in-a-million chance that aliens will land on Earth and kill all humans. Not only would that count as the death of the billions of us here at the time, but the longtermists would further include in the death toll all the trillions of future humans who would no longer come into existence. Tragic as that scenario is, should we really count it among much more probable risks like nuclear war killing hundreds of millions of us at some point this century?

A cynical take is that MacAskill’s book on longtermism would not have become a bestseller if its main conclusion were that, in fact, nuclear war presented our species’ biggest threat. For longtermism to be marketable, MacAskill needed a risk that’s neither totally obvious nor cartoonishly absurd. The extinction risk posed by AI, which Yudkowsky had been warning about and which many EA advocates had embraced, fit the need perfectly. In MacAskill’s many interviews and articles surrounding his summer 2022 book tour, existential risk posed by AI featured prominently. But catastrophe of another kind struck just a few months later: the implosion of FTX, and with it Bankman-Fried’s wealth and reputation.

Collateral damage from this fallout heavily besmirched the reputations of MacAskill, and EA more broadly—particularly when Bankman-Fried admitted that much of what he had been saying and advocating publicly was just a PR game. MacAskill had some serious egg on his face for recruiting Bankman-Fried into EA back when Bankman-Fried was an idealistic college student interested in animal rights, and encouraging him to go into a lucrative profession like crypto so he’d have more money to give to charity. We all know how that turned out.

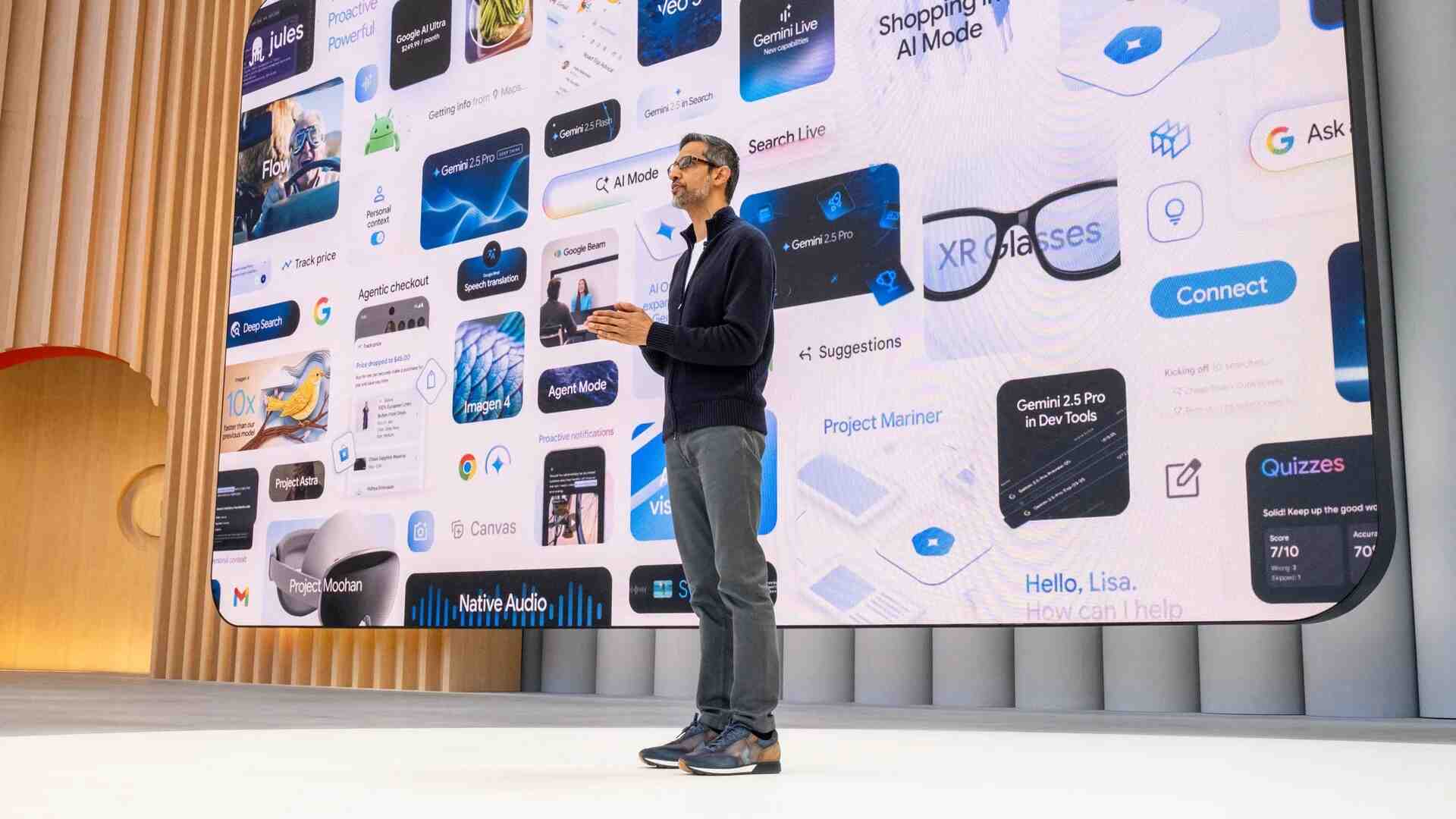

Right when the FTX debacle was unfolding, ChatGPT was released to the world and quickly became the fastest-growing app of all time. This proved an incredible opportunity for MacAskill and the EA movement to regain some of the respect Bankman-Fried had cost them: the rapid progress in AI made palpable by this mysterious app that so many of us were suddenly toying with, and the anxiety it induced, set the stage for EAs to convince the world that AI really does pose an existential risk and, in doing so, to score a giant “I told you so.”

While I have no doubt that most EAs are earnestly alarmed by AI, I also believe they have been overly eager to peddle the extremely speculative AI doomsday narrative, and that personal and reputational motivations to steer nuanced conversations on AI toward sci-fi apocalypse have been too great a temptation to resist. Bankman-Fried spoke of how superintelligent AI could decide to kill us all, but he was revealed to be a total fraud; convincing the world that this risk is real, even though much of what Bankman-Fried said publicly was bullshit, was a clear path to EA redemption.

JUMPING ON THE BANDWAGON

The EAs have been on a well-funded mission to co-opt the AI discourse and persuade the public that their yearslong preoccupation with AI existential risk—something many have laughed at and used to illustrate how silly longtermism can be—is no joke.

An AI safety organization recently released a 22-word statement that “[m]itigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.” This statement collected a wide range of signatories, which helped it make a huge media splash. While on the surface this seems like much of the world uniting toward a common goal, there’s some fishy stuff going on here.

The organization responsible for this statement was funded primarily by EA, though you have to dig a bit to reveal this shadowy influence. The statement is carefully crafted to suggest a foregone conclusion that AI does pose an extinction risk, and that the question is whether we should prioritize it, even though many experts do not accept this premise to begin with.

It’s been argued eloquently that many tech CEOs who signed this statement have been eager to jump on the AI existential-risk bandwagon because doing so is good for marketing (it gives their often weak and flawed AI products an aura of godlike superpower) and helpfully distracts from real harms. It’d be silly to worry about minor things like bias, disinformation, copyright infringement, etc., when there are much more serious problems, like AI infecting us with mind-control pathogens, right?

The media, both the traditional and the social kind, love all the drama of AI existential risk. You’ll get a lot more clicks with headlines about killer robots destroying humanity than with nuanced assessments of the pros and cons of AI. And the public loves stories like the hero scientist Geoffrey Hinton leaving Google so he can help save humanity from the Frankenstein’s monster he created; never mind that he happily raked in millions of dollars from his AI work while the brilliant scholars Timnit Gebru, Margaret Mitchell, and Meredith Whittaker were fired from Google for raising the alarm over AI harm.

While AI does pose many serious risks, and one day in the future human extinction could conceivably be one of them, for now we should not lose sight of the fact that the discourse on AI did not develop naturally, free of outside influence. Many of the loudest voices on AI have longstanding reasons to convince us that AI could spell our end and to position themselves as the saviors protecting us from this hypothetical AI apocalypse. We should listen to their arguments, but we should not overlook their incentives.